Chapter 1: The Orthodox Stack (2003–2015): Glory and Limits

1.1 Why begin in 2003

In 2015, the world's best humanoid robots fell over at the DARPA Robotics Challenge finals — in a competition designed to showcase them. Every one of those robots was running software descended from a beautiful idea that turned out to be too fragile: that if you model the robot precisely enough and solve a fast-enough optimization, balance will follow. This book is the story of what replaced that idea.

Before we can explain the replacement, we have to explain what was being replaced. The pre-2015 humanoid stack was not a collection of ad-hoc tricks. It was a coherent control paradigm with a clear intellectual lineage: a low-dimensional template for balance, an explicit ground-contact feasibility condition, a hierarchical whole-body optimizer, and a planning layer that reasoned over discrete footsteps. Each piece was a principled answer to a well-posed question. The paradigm's achievements were real: Honda's ASIMO was climbing staircases years before the iPhone shipped, and Japan's HRP series demonstrated full whole-body motion in research laboratories from Tsukuba to Paris. What the paradigm lacked was a mechanism to absorb the surprises that any open-world deployment inflicts: slippery surfaces, unexpected contact, sensor noise, communication latency. The stack worked when the model was right. When the model was wrong, it broke in ways that were both spectacular and structural.

Understanding how it broke is a prerequisite for understanding why the four catalysts of Parts II and III — Quasi-Direct-Drive (QDD) actuators, GPU-parallel simulation, teacher-student reinforcement learning, and the sim-to-real correction toolkit — were each necessary, and why none of them would have been sufficient on its own. This chapter is a compressed, honest look at the orthodox stack at its peak. The chapter that follows (Chapter 2) then separates what deserves to be discarded from what will survive into the modern System 0/1/2 architecture.

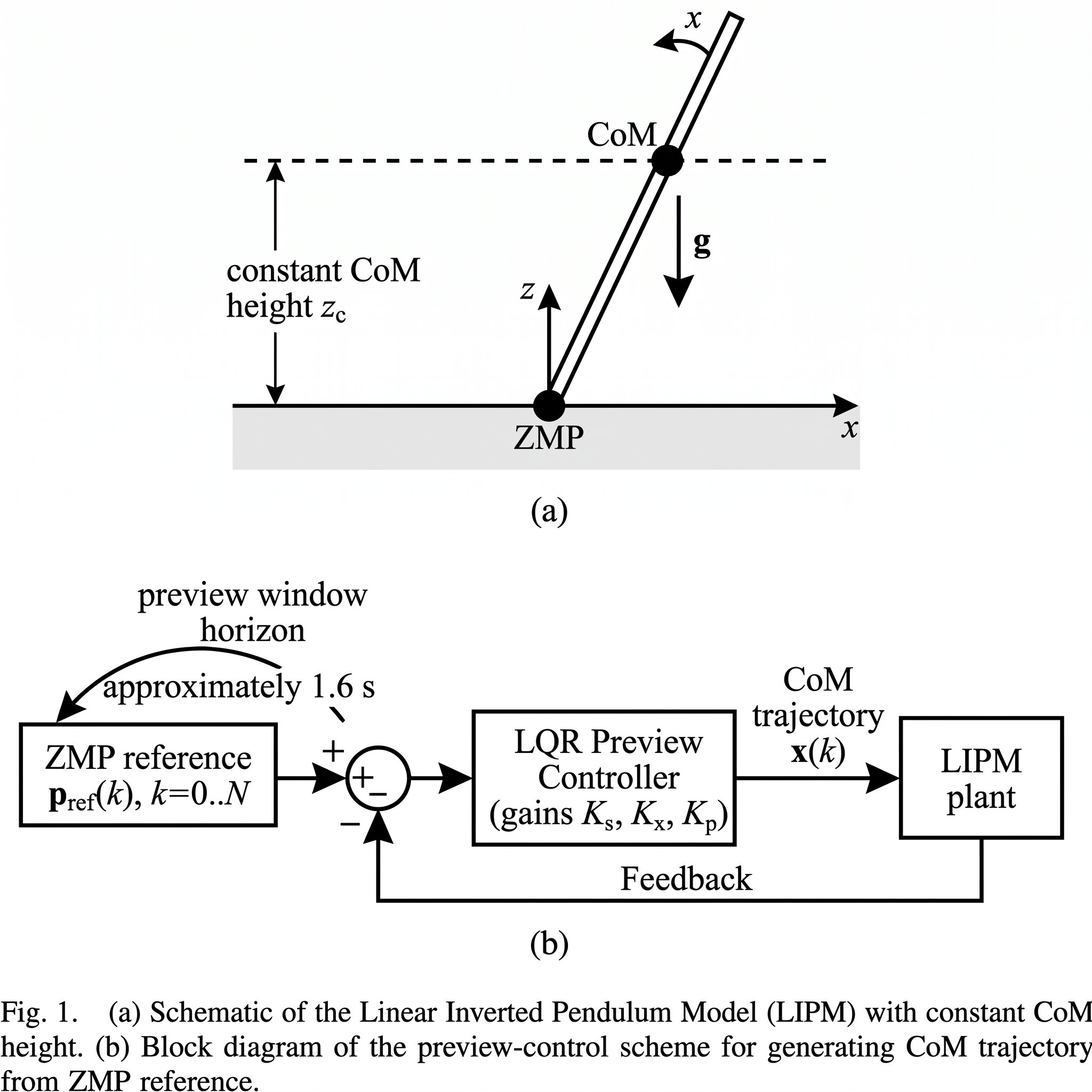

1.2 The Linear Inverted Pendulum Model

The central abstraction of 2003–2015 humanoid control is the Linear Inverted Pendulum Model (LIPM). The robot is modeled as a point mass — the center of mass (CoM) — supported by a massless, length-variable leg in contact with the ground. If the CoM height is held constant, the horizontal dynamics decouple from the vertical dynamics and become linear. That linearization is the reason LIPM is useful: a linear dynamical system admits closed-form analysis, Lyapunov certificates, and (as we will see) linear-quadratic optimal control.
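With the CoM height fixed at z_c, the horizontal LIPM dynamics are x'' = ω²(x − p), where ω = sqrt(g/z_c), x is the horizontal CoM position, and p is the Zero-Moment Point defined below; rearranged, p = x − (z_c/g)x'' is the cart-table relation that preview control builds on. The minimal Python sketch below (one horizontal axis, illustrative parameter values) integrates this linear system and checks it against the closed-form cosh/sinh solution, which makes the model's open-loop instability concrete.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def lipm_omega(z_c: float) -> float:
    """LIPM natural frequency omega = sqrt(g / z_c) for constant CoM height z_c [m]."""
    return math.sqrt(G / z_c)


def zmp_from_com(x: float, xdd: float, z_c: float) -> float:
    """Cart-table relation: the ZMP implied by CoM position x and acceleration xdd."""
    return x - (z_c / G) * xdd


def lipm_closed_form(x0: float, xd0: float, p: float, z_c: float, t: float):
    """Exact CoM position and velocity at time t with the ZMP held fixed at p."""
    w = lipm_omega(z_c)
    x = p + (x0 - p) * math.cosh(w * t) + (xd0 / w) * math.sinh(w * t)
    xd = (x0 - p) * w * math.sinh(w * t) + xd0 * math.cosh(w * t)
    return x, xd


def lipm_simulate(x0: float, xd0: float, p: float, z_c: float,
                  t_end: float, dt: float = 1e-3):
    """Semi-implicit Euler integration of xdd = omega^2 (x - p)."""
    w2 = G / z_c
    x, xd = x0, xd0
    for _ in range(int(round(t_end / dt))):
        xd += w2 * (x - p) * dt
        x += xd * dt
    return x, xd


if __name__ == "__main__":
    # A 1 cm CoM offset from a fixed ZMP at 0.8 m CoM height (illustrative values).
    exact = lipm_closed_form(0.01, 0.0, 0.0, 0.8, 1.0)
    approx = lipm_simulate(0.01, 0.0, 0.0, 0.8, 1.0)
    print(f"closed form: x = {exact[0]:.4f} m, simulated: x = {approx[0]:.4f} m")
```

A 1 cm offset between CoM and ZMP grows to roughly 17 cm within one second at a 0.8 m CoM height, because the solution contains a mode that grows like e^{ωt}. Holding balance therefore means continually moving the ZMP, which is exactly what the planning recipe below does.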

Kajita and colleagues formalized the canonical planning recipe in the paper that still opens most modern humanoid-control syllabi, "Biped walking pattern generation by using preview control of zero-moment point" [1]. Given a reference trajectory for the Zero-Moment Point (ZMP), the point on the ground about which the horizontal components of the net ground-reaction moment vanish, and therefore the point that must lie inside the robot's support polygon for balance to hold, the controller computes a CoM trajectory that tracks that ZMP reference through a preview-control window. The scheme is elegant, and it was validated carefully, but only in simulation: the original paper uses HRP-2P model parameters (154 cm tall, 58 kg) and reports that a preview window of approximately 1.6 s (roughly one-and-a-half future footsteps) is required to keep ZMP tracking error bounded; shorter windows such as 0.8 s produce visible over- and under-shooting [1]. The preview controller runs at 200 Hz (a 5 ms sampling period). Hardware validation on the physical HRP-2P was proposed as next-step work, not reported in the 2003 paper.
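The preview idea can be sketched with the cart-table model: state [x, x', x''], input CoM jerk, output ZMP p = x − (z_c/g)x''. The Python/NumPy sketch below is a simplified LQ preview controller, not necessarily the exact formulation of [1], which is usually presented as a preview servo with integral action on the ZMP error. It solves the discrete Riccati equation by fixed-point iteration, computes a state-feedback gain K and preview gains f[j], and assumes the reference stays at its last previewed value beyond the window. The 5 ms period and 1.6 s window follow the text; the 0.8 m CoM height, the weights q = 1 and r = 1e-6, and the step-shaped ZMP reference are illustrative assumptions.

```python
import numpy as np

G = 9.81  # gravitational acceleration [m/s^2]


def cart_table_model(T: float, z_c: float):
    """Discrete cart-table model: state [x, xd, xdd], input = CoM jerk, output = ZMP."""
    A = np.array([[1.0, T, T**2 / 2.0],
                  [0.0, 1.0, T],
                  [0.0, 0.0, 1.0]])
    B = np.array([[T**3 / 6.0], [T**2 / 2.0], [T]])
    C = np.array([[1.0, 0.0, -z_c / G]])
    return A, B, C


def solve_dare(A, B, Q, R, tol=1e-12, max_iter=200_000):
    """Solve the discrete algebraic Riccati equation by fixed-point iteration."""
    P = Q.copy()
    for _ in range(max_iter):
        S = R + B.T @ P @ B
        P_next = A.T @ P @ A + Q - A.T @ P @ B @ np.linalg.solve(S, B.T @ P @ A)
        if np.max(np.abs(P_next - P)) <= tol * max(1.0, np.max(np.abs(P_next))):
            return P_next
        P = P_next
    raise RuntimeError("Riccati iteration did not converge")


def preview_gains(T, z_c, preview_s, q=1.0, r=1e-6):
    """Gain K, preview gains f[j] for p_ref[k+1+j], and a tail gain for p_ref[k+N]."""
    A, B, C = cart_table_model(T, z_c)
    P = solve_dare(A, B, q * C.T @ C, np.array([[r]]))
    S_inv = np.linalg.inv(np.array([[r]]) + B.T @ P @ B)
    K = S_inv @ B.T @ P @ A
    Ac_T = (A - B @ K).T
    N = int(round(preview_s / T))
    f = np.zeros(N)
    v = q * C.T  # holds (Ac^T)^j C^T q, starting at j = 0
    for j in range(N):
        f[j] = (S_inv @ B.T @ v).item()
        v = Ac_T @ v
    # Assumption: the reference is held at its last previewed value beyond the window.
    f_tail = (S_inv @ B.T @ np.linalg.solve(np.eye(3) - Ac_T, v)).item()
    return A, B, C, K, f, f_tail


def track_zmp(p_ref, T=0.005, z_c=0.8, preview_s=1.6):
    """Simulate the closed loop; returns CoM position and ZMP at each sample."""
    A, B, C, K, f, f_tail = preview_gains(T, z_c, preview_s)
    N = len(f)
    r = np.concatenate([p_ref, np.full(N + 1, p_ref[-1])])  # pad the future
    x = np.zeros((3, 1))
    com, zmp = np.zeros(len(p_ref)), np.zeros(len(p_ref))
    for k in range(len(p_ref)):
        com[k], zmp[k] = x[0, 0], (C @ x).item()
        window = r[k + 1:k + 1 + N]
        u = (-K @ x).item() + f @ window + f_tail * window[-1]
        x = A @ x + B * u
    return com, zmp


if __name__ == "__main__":
    T = 0.005
    t = np.arange(0.0, 4.0, T)
    p_ref = np.where(t < 1.0, 0.0, 0.1)  # ZMP reference steps 10 cm forward at t = 1 s
    com, zmp = track_zmp(p_ref, T)
    print(f"CoM at t = 1 s: {com[200]:.3f} m, final ZMP: {zmp[-1]:.4f} m")
```

Because the step is visible inside the preview window, the CoM starts moving well before t = 1 s and is roughly halfway to the new ZMP location when the reference steps. Reducing preview_s to 0.8 s is an easy way to explore how a shorter window changes the tracking.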

Four assumptions buy the elegance. First, the CoM height is held constant — this is the linearizing assumption, and it fails whenever the robot must squat, jump, or land hard. Second, the ZMP reference is planned offline with exact knowledge of the intended footstep sequence. Third, the contact between foot and ground is assumed to be unilateral and rigid, with friction sufficient to prevent slip. Fourth, the system's internal model — masses, link lengths, inertia matrices — is assumed to be accurate. Each assumption is individually reasonable. Their conjunction is what fails in the open world.

Figure (after Kajita et al. [1], Figs. 2–3 and Fig. 4): the LIPM / cart-table model and the preview-control block diagram (Gemini-assisted reconstruction).

1.3 The Zero-Moment Point and the support polygon

The ZMP concept, introduced by Miomir Vukobratović in 1968 and formalized over the following decades, is the workhorse of orthodox humanoid balance. The ZMP is the point on the ground about which the horizontal components of the net ground-reaction moment vanish; equivalently, it is the point about which the inertial and gravitational forces acting on the robot produce no tipping moment. The balance certificate is then simple to state: if the ZMP lies strictly within the support polygon defined by the feet in contact with the ground, the robot is dynamically balanced. If the ZMP reaches the edge of the support polygon, the foot can no longer supply a corrective moment, and the robot begins to tip about that edge.
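The certificate itself is a point-in-polygon test. Here is a minimal Python sketch, assuming a convex support polygon whose vertices are listed counter-clockwise in ground coordinates. It returns a signed margin that equals the distance to the nearest edge when the ZMP is inside, is zero on an edge, and is negative outside. The foot dimensions in the example are illustrative.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def zmp_margin(zmp: Point, polygon: Sequence[Point]) -> float:
    """Signed ZMP margin for a convex, counter-clockwise support polygon.

    Positive: strictly inside (value = distance to the nearest edge).
    Zero: on an edge. Negative: outside the polygon.
    """
    px, py = zmp
    margin = math.inf
    n = len(polygon)
    for i in range(n):
        (x1, y1), (x2, y2) = polygon[i], polygon[(i + 1) % n]
        ex, ey = x2 - x1, y2 - y1
        # For CCW order the interior lies to the left of each edge (positive cross product).
        d = (ex * (py - y1) - ey * (px - x1)) / math.hypot(ex, ey)
        margin = min(margin, d)
    return margin


if __name__ == "__main__":
    foot = [(-0.1, -0.05), (0.1, -0.05), (0.1, 0.05), (-0.1, 0.05)]  # 20 cm x 10 cm sole
    print(zmp_margin((0.0, 0.0), foot))   # centered: 5 cm margin to the side edges
    print(zmp_margin((0.12, 0.0), foot))  # 2 cm past the toe edge: negative
```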

This single inequality (ZMP inside the support polygon) is the reason LIPM-based walking works at all. The planner's job becomes the generation of a ZMP reference trajectory that stays inside the polygon across all phases of walking, including the transfer of support from one foot to the other. The preview controller then realizes that trajectory by moving the robot's CoM. Viewed abstractly, the orthodox stack converts a high-dimensional balance problem into two decoupled one-dimensional (sagittal and lateral) trajectory-tracking problems, constrained by a two-dimensional polygonal feasibility region. That conversion is what made the orthodox stack computationally tractable in 2003.
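A minimal sketch of such a planner output, using a hypothetical helper that parks the ZMP under each support foot during single support and slides it linearly to the next foot during double support. Because the straight segment between two foot centers lies in the convex hull of both feet, this reference stays inside the support polygon throughout the transfer. The timing values are illustrative assumptions, not taken from [1].

```python
from typing import List, Tuple

Point = Tuple[float, float]


def zmp_reference(footsteps: List[Point], t_single: float = 0.6,
                  t_double: float = 0.2, dt: float = 0.005) -> List[Point]:
    """Piecewise-linear ZMP reference sampled every dt seconds.

    footsteps: support-foot centers (x, y) in the order they bear weight.
    """
    n_single = int(round(t_single / dt))
    n_double = int(round(t_double / dt))
    ref: List[Point] = []
    for i, (x, y) in enumerate(footsteps):
        ref.extend([(x, y)] * n_single)  # single support: ZMP under the stance foot
        if i + 1 < len(footsteps):
            nx, ny = footsteps[i + 1]
            for k in range(1, n_double + 1):  # double support: slide toward the next foot
                s = k / n_double
                ref.append((x + s * (nx - x), y + s * (ny - y)))
    return ref


if __name__ == "__main__":
    steps = [(0.0, 0.1), (0.25, -0.1), (0.5, 0.1)]  # alternating left/right feet
    ref = zmp_reference(steps)
    print(len(ref), ref[0], ref[-1])
```

A reference like this is what a preview controller consumes: the ZMP moves in discrete hops between footholds, and the CoM trajectory that realizes it is smooth.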

The ZMP criterion has two costs, both of which become important in Chapter 6. First, its convenient linear relation between ZMP and CoM holds only under the constant-CoM-height assumption. Once the robot accepts vertical CoM motion, that relation becomes nonlinear, and once contacts are no longer coplanar (a hand on a railing, a foot on a step edge), the ZMP stops being a sufficient tipping-feasibility certificate; one must then fall back to more general tools such as the centroidal moment pivot or full contact-wrench feasibility. Second, it assumes the contact is unilateral and rigid, which is a better description of a wooden floor than of the foam-backed carpets and hydraulic-oil-slicked catwalks of the 2015 DARPA finals.
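The dependence on constant height can be made explicit with a point-mass model on flat ground, neglecting angular momentum about the CoM (an assumption; a full multibody ZMP adds those terms). Requiring the ground-reaction force, with horizontal component m·x'' and vertical component m(z'' + g), to pass through the CoM gives the ZMP coordinate:

$$
p_x = x - \frac{z\,\ddot{x}}{\ddot{z} + g}
$$

With constant height (z = z_c, z'' = 0) this collapses to the linear cart-table relation p_x = x − (z_c/g)x'' used by the LIPM; any vertical CoM acceleration puts z'' in the denominator and makes the relation nonlinear.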

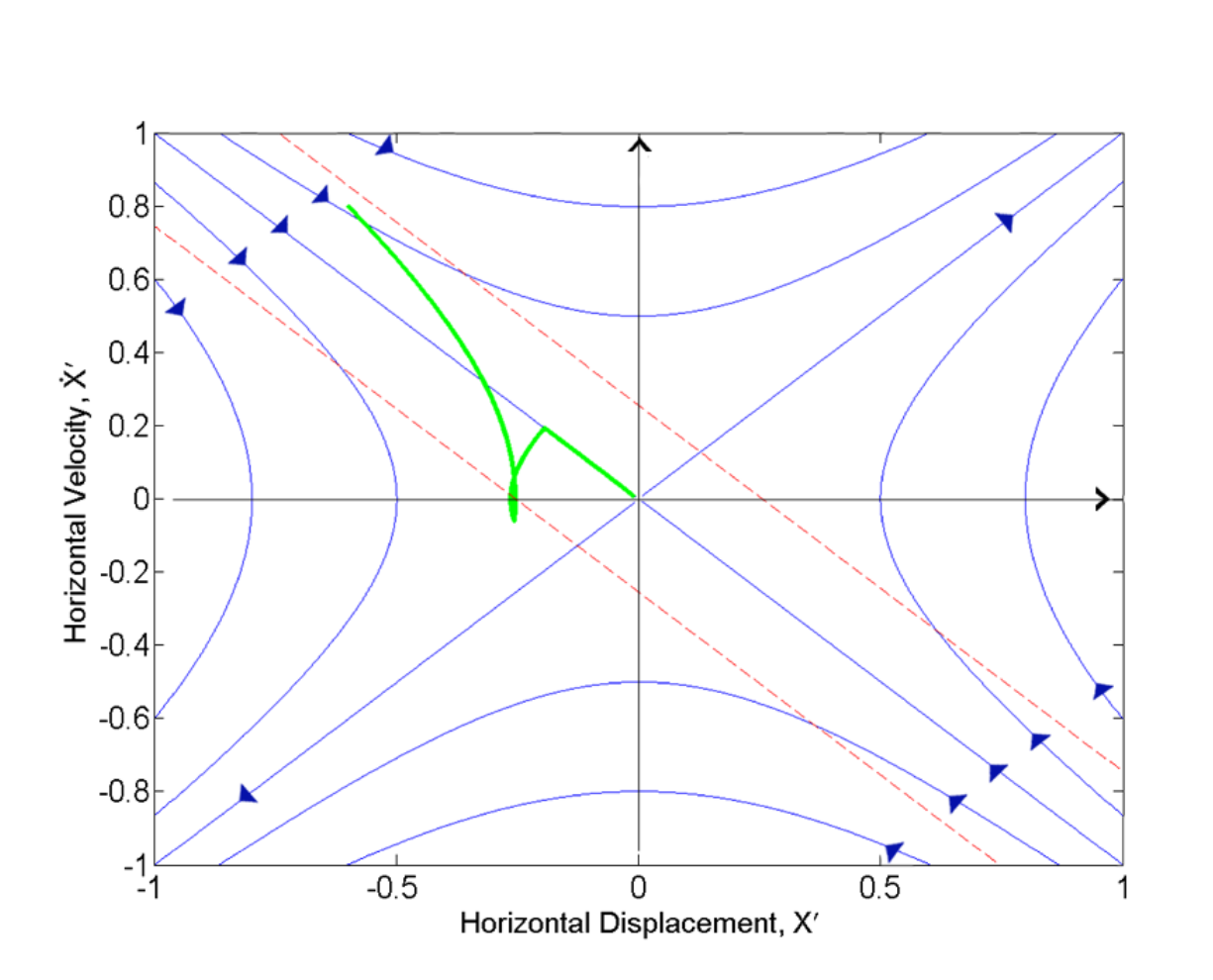

1.4 The capture point and reactive stepping

The ZMP criterion tells the robot whether it is currently balanced. It does not tell the robot what to do when it is not. For that, Jerry Pratt and colleagues at IHMC introduced the capture point (CP) in 2006 [2]: the point on the ground where the robot must step in order to come to a complete stop in a single step. The capture point is derived directly from the LIPM's orbital energy, with a closed-form expression in terms of the CoM position, CoM velocity, and LIPM natural frequency. Its N-step generalization (the N-step capture region) is the set of ground points where the robot can step next and still come to rest within N steps.
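For the LIPM, the instantaneous capture point along one horizontal axis is ξ = x + x'/ω with ω = sqrt(g/z_c), and it evolves as ξ' = ω(ξ − p): it runs away from the ZMP exponentially. A minimal Python sketch follows. The example feeds it the CoM height and post-push velocity quoted in the next paragraph, but treats the robot as a plain LIPM point mass rather than the flywheel model of [2], so the number is illustrative only.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def capture_point(x: float, xd: float, z_c: float) -> float:
    """Instantaneous capture point xi = x + xd / omega of the LIPM (one horizontal axis)."""
    omega = math.sqrt(G / z_c)
    return x + xd / omega


def capture_point_rate(xi: float, p: float, z_c: float) -> float:
    """Capture-point dynamics: d(xi)/dt = omega * (xi - p), unstable away from the ZMP."""
    return math.sqrt(G / z_c) * (xi - p)


if __name__ == "__main__":
    # CoM above the stance foot (x = 0), pushed to 0.2 m/s, CoM height 0.9375 m.
    xi = capture_point(0.0, 0.2, 0.9375)
    print(f"step about {100 * xi:.1f} cm ahead of the CoM to come to rest")
```

Here the capture point lands about 6 cm ahead of the CoM. If the ZMP stays behind that point, capture_point_rate shows the gap widening, which is why the step has to be taken promptly.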

Reactive stepping was a genuine advance. The 2006 paper validates the concept in simulation on a planar bipedal model with a flywheel body (mass 25 kg, inertia 1.225 kg·m², CoM height 0.9375 m, peak ankle torque 100 Nm), recovering from an impulsive push that imparts 0.2 m/s forward velocity via an angular-momentum lunge [2]. Hardware validation on the M2V2 bipedal humanoid followed in Pratt, Koolen, and colleagues' 2012 IJRR paper [3]. This is the lineage that, a decade after the 2006 formulation, produces Boston Dynamics's viral videos of Atlas recovering from a shove with an improvised backward step. The intuition — step toward the capture point, not the foot's pre-planned target — is the orthodox stack's best answer to disturbances in the wild.

But reactive stepping inherits LIPM's assumptions. Once the CoM excursion is large enough to violate constant-height, the closed-form CP expression no longer describes where the robot will actually be. Step commitments take finite time; during the swing phase of a commanded step, the LIPM approximation continues to drift from reality. And CP is computed from a state estimate — a filtered combination of IMU readings, joint encoders, and sometimes vision — which, in the DRC environment of dust, cable drag, and communication latency, was frequently wrong. The 2006 paper itself catalogs these limitations; the point for our narrative is that the orthodox stack's best reactive primitive is still a local linearization of a nonlinear world.
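The dependence on the state estimate is easy to quantify. Because ξ = x + x'/ω, a velocity-estimate error δx' shifts the capture point by δx'/ω, while an error in the assumed CoM height changes ω itself. The short Python sketch below evaluates both effects; the nominal state and the error magnitudes (0.05 m/s and 5 cm) are hypothetical values chosen only to show the scale.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def capture_point(x: float, xd: float, z_c: float) -> float:
    """LIPM instantaneous capture point along one horizontal axis."""
    return x + xd / math.sqrt(G / z_c)


def capture_point_shift(x: float, xd: float, z_c: float,
                        dxd: float = 0.0, dz: float = 0.0) -> float:
    """Capture-point displacement caused by a velocity error dxd and a CoM-height error dz."""
    return capture_point(x, xd + dxd, z_c + dz) - capture_point(x, xd, z_c)


if __name__ == "__main__":
    nominal = dict(x=0.0, xd=0.4, z_c=0.9)  # hypothetical post-disturbance state
    print(f"0.05 m/s velocity error: {100 * capture_point_shift(**nominal, dxd=0.05):.1f} cm")
    print(f"5 cm CoM-height error:   {100 * capture_point_shift(**nominal, dz=0.05):.1f} cm")
```

With these numbers the velocity error moves the stepping target by about 1.5 cm, while the height error moves it by a few millimeters, so for this state the velocity estimate dominates the error budget.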

Figure (after Pratt et al. [2], Fig. 3).

1.5 The whole-body inverse-dynamics QP

By 2010, the community had converged on a whole-body control architecture with three distinct layers. At the top, a task planner produces goals at human timescale: walk to that valve, turn it a quarter-turn, step over that doorframe. In the middle, a trajectory generator — often LIPM-based preview control for locomotion, operational-space inverse kinematics for manipulation — produces task-space trajectories. At the bottom, a whole-body inverse-dynamics quadratic program (QP) computes joint torques at 1 kHz that realize those trajectories while respecting friction cones, joint limits, torque limits, and centroidal-dynamics constraints.

The CMU group around Christopher Atkeson was among those who pushed this architecture to its mature form for the DARPA Robotics Challenge. Feng et al.'s paper in the Journal of Field Robotics is the canonical write-up of that maturity: the whole-body QP on the hydraulic Atlas platform ran at approximately 1 kHz with roughly 30 ms of planning latency, and the paper reports qualitative performance on the DRC task suite (door opening, valve turning, power-tool use) [4]. The authors themselves list the limitations that the next decade would spend replacing: brittleness under contact surprises, dependence on accurate dynamic and frictional models, prohibitive per-scenario engineering burden, and the failure of planning–control decoupling under large disturbance. That same brittleness would come to define the DRC narrative, culminating in the falls of the 2015 Finals.

The QP is a beautiful object. Given a stack of task priorities, it solves for joint torques that minimize a weighted sum of task-tracking errors subject to hard constraints. Friction cones are linearized into polyhedral approximations and enter as linear inequalities. Joint limits and torque limits are linear box constraints. The centroidal-dynamics constraint (the contact forces chosen by the QP must produce the desired rate of change of the robot's centroidal momentum) is linear in the decision variables once the task trajectory has specified that desired centroidal wrench. The whole problem is convex, and modern interior-point solvers dispatch it in well under a millisecond on commodity hardware.
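The linearized friction cone can be written down directly. The sketch below assumes a k-sided pyramid inscribed in the Coulomb cone (so the effective coefficient shrinks to μ·cos(π/k)) and returns rows a such that a·f ≤ 0 for a contact force f = (f_x, f_y, f_z), plus a unilateral row enforcing f_z ≥ 0. These are the kind of linear inequalities a whole-body QP stacks for each contact; the code is a standalone illustration, not taken from [4].

```python
import math
from typing import List, Sequence


def friction_pyramid_rows(mu: float, k: int = 4) -> List[List[float]]:
    """Rows a with a . f <= 0 approximating |f_t| <= mu * f_z by an inscribed k-sided pyramid."""
    mu_in = mu * math.cos(math.pi / k)  # shrink so the pyramid lies inside the true cone
    rows = []
    for i in range(k):
        theta = 2.0 * math.pi * i / k  # outward facet normal direction in the tangent plane
        rows.append([math.cos(theta), math.sin(theta), -mu_in])
    rows.append([0.0, 0.0, -1.0])  # unilateral contact: the ground can only push (f_z >= 0)
    return rows


def satisfies(rows: Sequence[Sequence[float]], f: Sequence[float], tol: float = 1e-9) -> bool:
    """True if every linear inequality a . f <= 0 holds (within tol)."""
    return all(sum(a_i * f_i for a_i, f_i in zip(a, f)) <= tol for a in rows)


if __name__ == "__main__":
    rows = friction_pyramid_rows(mu=0.5, k=4)
    print(satisfies(rows, (10.0, 0.0, 100.0)))  # small tangential load: feasible
    print(satisfies(rows, (40.0, 0.0, 100.0)))  # inside the true cone, outside the pyramid
    print(satisfies(rows, (0.0, 0.0, -5.0)))    # pulling on the ground: infeasible
```

The second case sits inside the true cone (40 < 0.5 × 100) but outside the inscribed pyramid, which is the price of a conservative linearization; more facets shrink that gap at the cost of more QP constraints.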

The QP's failure mode is not computational. It is semantic. When the robot's foot slips on an unmodeled oil patch, the QP solves for the joint torques that best satisfy its internal model — but its internal model no longer describes the robot. When the hydraulic actuator saturates because a cable got caught, the QP issues commands the hardware cannot execute. When the time between commanding a torque and seeing its effect in the sensor stream jitters — because of operating-system scheduling, communication bus contention, or hydraulic valve latency — the torque command is issued against a state estimate that has already expired. Each failure is a specific violation of a specific assumption. The orthodox stack's engineering burden, in practice, was the burden of auditing and hardening every one of these assumptions, scenario by scenario.

1.6 Footstep planning and the planning-control split

One more piece completes the orthodox architecture: the footstep planner. Given a terrain map and a desired goal, the planner chooses a sequence of discrete foot placements that are kinematically reachable, dynamically feasible (via LIPM/CP reasoning), collision-free with respect to obstacles, and compatible with the robot's balance constraints. Through the 2010s, footstep planning was solved primarily with search: A* over a discretized reachable set, or mixed-integer optimization over a polytope decomposition of the terrain. The canonical reference implementations (Kuindersma, Deits, Fallon, Ratliff, Righetti, and others across multiple labs) produced plans in a few hundred milliseconds for the DRC terrain.
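To make the search formulation concrete, here is a toy A* sketch over a 2D grid of candidate footholds. It simplifies by assumption: every free cell is a foothold, any displacement up to max_step cells (Euclidean) is a legal step, blocked cells cannot be stepped on but can be stepped over, and the planner ignores foot alternation, orientation, and the LIPM/CP feasibility checks a real planner would add. The function name and grid model are hypothetical. Because the Euclidean heuristic never overestimates the remaining step cost, the returned plan is shortest for this model.

```python
import heapq
import math


def plan_footsteps(start, goal, blocked, width, height, max_step=2):
    """A* over grid cells; returns (list of footholds, total step length) or (None, inf)."""
    steps = [(dx, dy) for dx in range(-max_step, max_step + 1)
             for dy in range(-max_step, max_step + 1)
             if (dx, dy) != (0, 0) and math.hypot(dx, dy) <= max_step]

    def heuristic(cell):
        return math.dist(cell, goal)  # straight-line distance: admissible and consistent

    open_heap = [(heuristic(start), 0.0, start)]
    g_cost = {start: 0.0}
    parent = {start: None}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1], g
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route to this cell was already found
        for dx, dy in steps:
            nxt = (cell[0] + dx, cell[1] + dy)
            if not (0 <= nxt[0] < width and 0 <= nxt[1] < height) or nxt in blocked:
                continue
            ng = g + math.hypot(dx, dy)
            if ng < g_cost.get(nxt, math.inf):
                g_cost[nxt] = ng
                parent[nxt] = cell
                heapq.heappush(open_heap, (ng + heuristic(nxt), ng, nxt))
    return None, math.inf


if __name__ == "__main__":
    blocked = {(2, 0), (2, 1)}  # hypothetical obstacle cells
    path, cost = plan_footsteps((0, 0), (4, 0), blocked, width=6, height=3)
    print(path, round(cost, 3))
```

The plan steps over the blocked cells rather than onto them. What the sketch cannot do is react mid-step: once a disturbance invalidates the plan, the whole search must run again.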

The footstep planner and the whole-body QP are separated. The planner chooses where each foot will land; the QP realizes that plan through joint torques. This separation is intellectually clean — a higher-level planner deals with combinatorial decisions, a lower-level controller deals with continuous dynamics — and it is how every orthodox humanoid stack of the period is organized.

This separation is the orthodox paradigm's deepest structural weakness. When a large external disturbance arrives — a push, a slip, a trip — the footstep plan that was current becomes invalid, but the QP beneath it continues to execute against the old plan until a new plan arrives. In Boston Dynamics demo videos from 2013–2014, one can watch the planner's stale instructions colliding with the real robot's state, producing the pathognomonic "stutter step" as the controller tries to realize a plan that no longer matches reality. The fix at the time was to run the planner faster and re-plan more aggressively. That mitigated but did not cure the issue: the fundamental problem is that planning is discrete, disturbance is continuous, and the two live in different time scales.

The post-2019 learning-based paradigm dissolves this split entirely. Instead of planning footsteps and then executing them, the learned policy emits a joint-level action at every control tick, and footstep-like behavior emerges from the policy's response to the state history. We return to this point in Chapter 6 and develop it formally in Chapter 7.

1.7 The robot lineage: ASIMO, HRP, Atlas

Three families of robots defined the orthodox era. Honda's ASIMO, descended from the P2 prototype of 1996, was the public face of humanoid research for nearly two decades; it demonstrated stair climbing and object handoff at speeds that, in the early 2000s, looked like science fiction. Japan's HRP series (HRP-2, HRP-3, HRP-4) — developed by AIST together with Kawada Industries and the research groups around Kajita — became the platform of choice for academic humanoid research, and the testbed for the preview-control walking scheme we examined in Section 1.2 [1]. Boston Dynamics's Atlas — initially a hydraulic platform commissioned for the DARPA Robotics Challenge — pushed the same paradigm into far more dynamic territory, with power densities and joint speeds that no previous humanoid had matched.

These robots achieved real capability. ASIMO's staircase work is the canonical demonstration that LIPM-based control, executed with sufficient engineering rigor on well-characterized hardware, can yield visually convincing humanoid locomotion. The DRC-era robots, in the hands of well-funded teams (IHMC, MIT, and WPI-CMU on Atlas; KAIST on its own DRC-HUBO), completed multi-step manipulation tasks (door, valve, drill, wall breach) in front of judges and television cameras. These were not failures. They were the orthodox stack at its peak capability.

What the DRC also exposed was the paradigm's ceiling. Between task attempts, the robots moved slowly and deliberately. Disturbances — a cable snag, a door that opened stiffer than modeled, a platform that shifted under a foot — produced falls that were not rare and not recoverable. The falls were not evidence that any particular team was sloppy. They were evidence that the orthodox paradigm's handling of modeling error was structurally inadequate: it treated error as a perturbation to be compensated, rather than as the central fact about the environment.

1.8 Why the stack broke: a structural audit

Five distinct failure modes appeared repeatedly across the DRC and its predecessors, and each corresponds to an assumption we have already named.

Model error compounds over time. The whole-body QP is only as good as the rigid-body dynamics model it encodes. Real humanoids have gear backlash, cable stretch, joint-frictional asymmetry, and thermally varying motor constants. Each is a few percent; their aggregate effect, compounded over a multi-second dynamic task, is a several-centimeter mismatch between where the model says the CoM is and where it actually is, enough to push the ZMP out of the support polygon in a worst-case scenario. The orthodox remedy was identification: measure every parameter, update the model. This worked in the lab. It did not generalize to the field.

Contact is not smooth. LIPM's validity depends on unilateral, rigid, high-friction contact with the ground. Real contact involves impact transients (a foot's strike on a hard surface excites high-frequency vibrations that no 200 Hz controller can observe), tangential micro-slip (friction is only a statistical average over contact-patch asperities), and occasionally soft contact (mats, carpets, sand). Each violates LIPM in a distinct way, and the failures compose: on a slightly tilted DRC platform covered with a carpet runner, the LIPM validity region is a shadow of its laboratory version.

Sensor noise and communication latency poison state estimation. The QP acts on a state estimate, not on the state. State estimation fuses IMU, encoders, and sometimes vision via filters (extended Kalman, complementary, invariant-EKF in the best implementations). Each source has its own noise spectrum and latency. Under the DRC's communication-constrained conditions — intentionally limited bandwidth and enforced latency between robot and operator — state estimates drifted, and the QP's solutions began to act on a fictional robot.
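A minimal sketch of the simplest estimator in that list, a single-axis complementary filter: it integrates the gyro for short-term accuracy and blends in an accelerometer-derived tilt angle to cancel long-term drift. The blend factor, sampling period, and bias values are hypothetical, and real humanoid estimators (extended or invariant EKFs) fuse many more signals. The example shows the key property: a gyro bias b that makes a pure integrator drift without bound leaves the filter with only a bounded offset of α·b·dt/(1 − α).

```python
def complementary_filter(gyro_rates, accel_angles, dt=0.005, alpha=0.98, theta0=0.0):
    """Single-axis tilt estimate: theta <- alpha*(theta + rate*dt) + (1 - alpha)*accel_angle."""
    theta = theta0
    history = []
    for rate, acc in zip(gyro_rates, accel_angles):
        theta = alpha * (theta + rate * dt) + (1.0 - alpha) * acc
        history.append(theta)
    return history


def gyro_only(gyro_rates, dt=0.005, theta0=0.0):
    """Pure gyro integration, for comparison: any bias accumulates without bound."""
    theta = theta0
    for rate in gyro_rates:
        theta += rate * dt
    return theta


if __name__ == "__main__":
    true_tilt, bias, n = 0.05, 0.01, 4000  # rad, rad/s, samples (20 s at 200 Hz)
    rates = [bias] * n                     # robot is still, so the gyro reads only its bias
    accel = [true_tilt] * n                # noise-free accelerometer tilt, for clarity
    est = complementary_filter(rates, accel)[-1]
    print(f"complementary: {est:.5f} rad, gyro-only: {gyro_only(rates):.3f} rad")
```

After 20 s the gyro-only estimate has drifted by 0.2 rad, while the blended estimate settles about 2.5 mrad from the true tilt. The trade-off is that the accelerometer also senses linear acceleration, so during a hard footstrike it reports a tilt that is not there.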

The planning–control split fails under large disturbance. We discussed this in Section 1.6. A disturbance that invalidates the current footstep plan must be detected, propagated to the planner, replanned, and the new plan dispatched to the QP — all before the controller commits to the next contact event. When the time budget exceeds the available reaction window, the robot commits to a plan it should have abandoned.
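The time budget can be made concrete with the capture-point dynamics ξ' = ω(ξ − p). If the ZMP is pinned at the edge of the foot and the capture point already lies e0 beyond it, the gap grows as e0·e^{ωt}, so the robot has about t = ln(D/e0)/ω seconds before the gap exceeds its maximum step length D. The sketch below compares that window with a sum of pipeline delays. Every number is hypothetical, loosely echoing the roughly 30 ms planning latency and few-hundred-millisecond replanning times cited earlier in the chapter.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def reaction_window(e0: float, max_step: float, z_c: float) -> float:
    """Seconds until a capture-point gap e0 beyond the ZMP grows past max_step."""
    omega = math.sqrt(G / z_c)
    return math.log(max_step / e0) / omega


def replan_in_time(delays_s: dict, window_s: float) -> bool:
    """True if detection, replanning, dispatch, and the swing all fit in the window."""
    return sum(delays_s.values()) <= window_s


if __name__ == "__main__":
    window = reaction_window(e0=0.05, max_step=0.4, z_c=0.9)
    delays = {"detect": 0.05, "replan": 0.30, "dispatch": 0.03, "swing": 0.40}  # hypothetical
    print(f"window {window:.2f} s, budget {sum(delays.values()):.2f} s, "
          f"fits: {replan_in_time(delays, window)}")
```

With these illustrative numbers the window is about 0.63 s against a 0.78 s pipeline, so the robot commits to the stale plan. Because the window shrinks only logarithmically as the initial gap grows, shaving latency buys time slowly.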

Every improvement is a new scenario. Because the orthodox stack is prescriptive, every new operating condition is a new engineering problem. A different floor surface requires new friction coefficients; a different payload requires a new inertia estimate; a different lighting condition requires new vision calibration. The engineering labor scales with the deployment environment's complexity, and that scaling relationship is unfavorable.

None of these is a bug. Each is a feature of the paradigm's intellectual commitments: prescribe the model, solve the optimization, prove the correctness. The paradigm's replacement — which Part II introduces catalyst-by-catalyst — drops the first commitment and derives correctness from statistical coverage of a distribution rather than proof about a specific model. That is the regime change. Chapter 3 will describe the transition at the architecture level.

1.9 What the DRC left behind

The DARPA Robotics Challenge ended in June 2015. Its immediate aftermath was a conspicuous public retrenchment: industry funding for orthodox humanoid research collapsed, and several DRC-era platforms (including the DRC-configuration Atlas, KAIST's DRC-HUBO, and NASA's Valkyrie in its original configuration) were retired or substantially redesigned. The field entered what, in retrospect, was the orthodox paradigm's quiet period: publicly visible demos slowed, but the research that became Parts II and III of this book was already underway in the labs that would define the 2019–2024 canon, namely MIT (Cheetah), ETH (ANYmal and Hutter-group work), Berkeley (Malik/Sreenath/Darrell), Oregon State (Hurst, Cassie), AIST (continuing the Kajita tradition), and Boston Dynamics itself.

What did not collapse was the orthodox paradigm's intellectual contribution. LIPM is still a useful low-dimensional template for reward design in RL; the ZMP inequality is still a certificate that learned controllers often implicitly respect; the whole-body QP survives inside every modern humanoid as the System 0 torque-level fallback and as the warm-start for sampling-based model-predictive control. Chapter 2 treats this legacy carefully: not as nostalgia, but as a working inventory of primitives that will re-appear, repurposed, under the new architecture.

The pattern should be familiar to anyone who has watched a technical paradigm shift: the new approach does not annihilate the old one, it absorbs and re-frames its useful components. The orthodox stack's claim to describe the whole of humanoid control is what 2015–2026 falsified. Its claim to describe part of humanoid control — the part closest to the joint, the part that runs at 1 kHz, the part where the physics is tractable and the guarantees are provable — remains intact. That partial claim is the subject of Chapter 2.

1.10 Open questions

Three questions close this chapter and open the next. First, which parts of the orthodox stack are worth carrying forward? The whole-body QP's mathematical structure is clearly useful; the LIPM's low-dimensional template for balance is clearly useful; capture-point reasoning as a safety certificate is probably useful. But footstep planning as a separate-and-discrete reasoning layer is, by 2024, largely dissolved into the learned policy's emission of per-tick joint commands. Chapter 2 separates survivors from casualties.

Second, how did it become credible that learned policies would do better? The transition was not inevitable. In 2015, "use deep learning for humanoid balance" was not a credible research program. By 2019, it was. Four technical developments, each rigorously demonstrated on hardware by a small handful of groups, made the difference, and the rest of Part II is the account of how. Chapter 3 maps the inter-dependencies.

Third, was the orthodox paradigm wrong, or was it merely insufficient? The distinction matters, because "wrong" implies the field should discard its lessons, while "insufficient" implies the field should layer something new above the foundation. This book's position is the second: the orthodox stack was insufficient, not wrong, and the modern System 0/1/2 architecture is most productively understood as a stacking of new capabilities on an irreducible base of classical control. Chapter 2 argues this position in detail; Chapter 9 re-encounters it formally once the three-layer architecture is on the table.

The paradigm shift Chapter 3 previews is, in the end, a re-allocation of where the intelligence lives. The orthodox stack placed the intelligence in the model: model the robot, model the environment, solve the optimization. The modern stack places the intelligence in the distribution: cover a wide enough distribution of environments in simulation, train a policy that adapts from history, and deploy. Both are coherent engineering philosophies. Only the second has so far produced a humanoid that walks reliably outdoors across plazas, sidewalks, tracks, and grass without falls over a week of full-day testing [5]. That comparison is what the rest of this book unpacks.

References

- [1] Kajita, S., Kanehiro, F., Kaneko, K., Fujiwara, K., Harada, K., Yokoi, K., & Hirukawa, H. (2003). Biped walking pattern generation by using preview control of zero-moment point. Proc. IEEE ICRA. doi:10.1109/ROBOT.2003.1241826.

- [2] Pratt, J., Carff, J., Drakunov, S., & Goswami, A. (2006). Capture point: A step toward humanoid push recovery. Proc. IEEE-RAS Humanoids. doi:10.1109/ICHR.2006.321385.

- [3] Pratt, J., Koolen, T., de Boer, T., Rebula, J., Cotton, S., Carff, J., Johnson, M., & Neuhaus, P. (2012). Capturability-based analysis and control of legged locomotion, Part 2: Application to M2V2, a lower-body humanoid. International Journal of Robotics Research.

- [4] Feng, S., Whitman, E., Xinjilefu, X., & Atkeson, C. G. (2014). Optimization-based full body control for the DARPA Robotics Challenge. Journal of Field Robotics. doi:10.1002/rob.21559.

- [5] Radosavovic, I., Xiao, T., Zhang, B., Darrell, T., Malik, J., & Sreenath, K. (2024). Real-world humanoid locomotion with reinforcement learning. Science Robotics. doi:10.1126/scirobotics.adi9579. arXiv:2303.03381.