Chapter 6: The Learning Algorithm Canon

6.1 Five papers that had to arrive in order

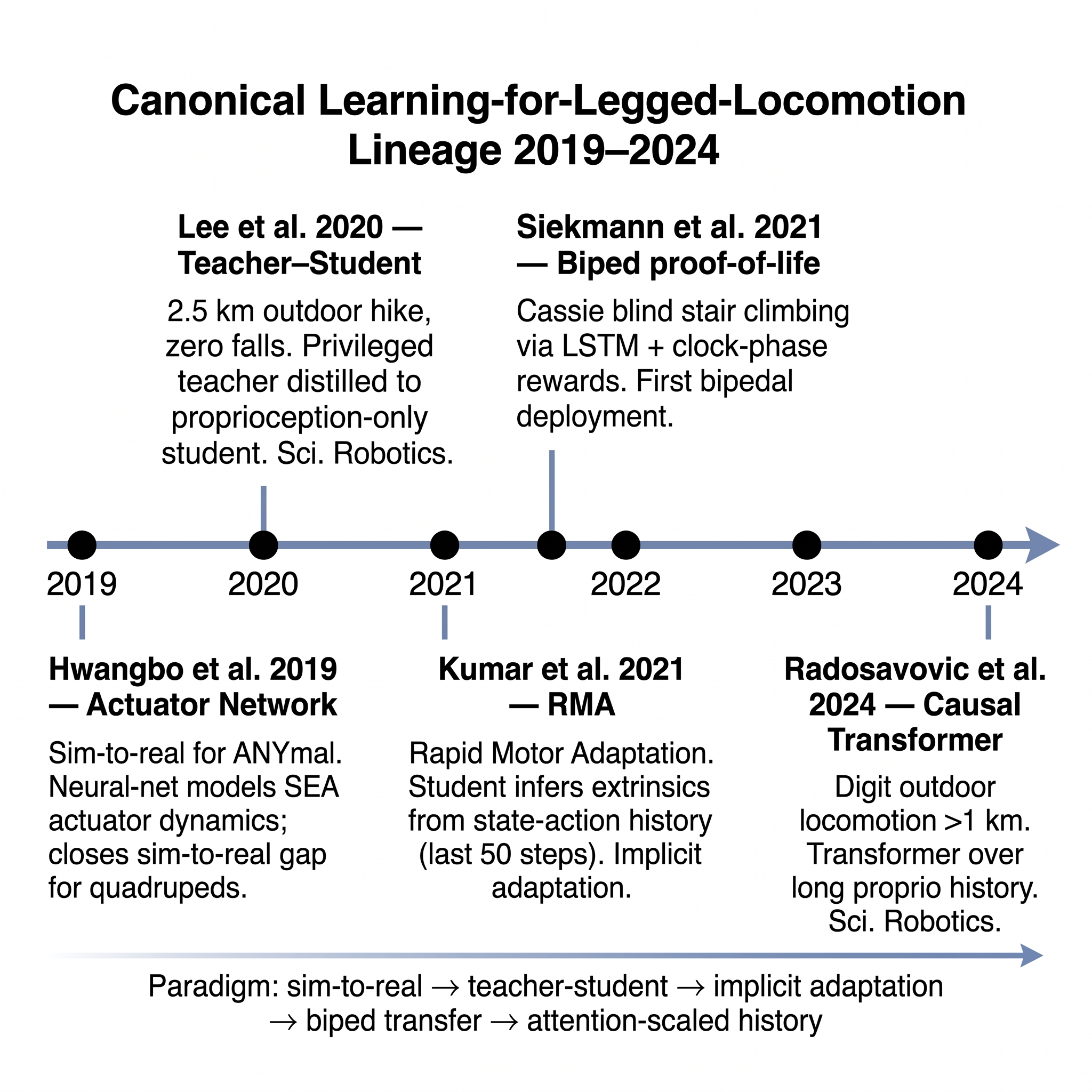

Five papers, published between 2019 and 2024, define the reinforcement-learning (RL) canon that turned GPU-parallel simulation (Chapter 5) and QDD hardware (Chapter 4) into a deployable humanoid locomotion stack. Each paper added a piece the previous one had left open. Their ordering is what matters.

- Hwangbo et al., 2019 — actuator network closes the motor-model gap.

- Lee et al., 2020 — teacher-student privileged information bridges simulation truth to proprioceptive deployment.

- Kumar et al., 2021 (RMA) — adaptation becomes implicit, inferred from state-action history rather than estimated explicitly.

- Siekmann et al., 2021 — the recipe graduates from quadruped to biped.

- Radosavovic et al., 2024 — the recipe graduates to full-size humanoid with a causal Transformer.

Each of these five is a system demonstration as well as an algorithmic contribution — the kind of paper critical-analyst's novelty matrix labels B (breakthrough system) rather than T (theoretical) or S (survey). The reason for that classification is precisely why the five-paper sequence matters: each demonstration proved on real hardware that the next-layer assumption could be relaxed. Without Hwangbo 2019, Lee 2020 would have had a simulator that did not match its real ANYmal. Without Lee 2020, Kumar 2021 would have had no privileged-teacher baseline to distill from. Without Kumar 2021's implicit-adaptation framing, Siekmann 2021 would have needed explicit system identification on Cassie. Without Siekmann 2021's biped proof-of-life, the humanoid community would not yet have believed Radosavovic 2024 was possible.

The chapter proceeds through these five papers (§§6.2–6.6), then audits three ancillary developments that the canon implies but does not itself deliver: the history-encoder architectural evolution from TCN to LSTM to Transformer (§6.7), the RL algorithm family of PPO and its off-policy return via FastTD3 (§6.8), and the motion-prior substrate (DeepMimic, AMASS, LAFAN1, OmniRetarget, PHC) that reference-based RL sits on top of (§6.9). Section 6.10 surveys the whole-body loco-manipulation extensions — HumanPlus, H2O / OmniH2O, TWIST, Expressive Whole-Body Control, HOVER — that pushed the canon from locomotion into bimanual manipulation with the upper body. The chapter closes with the Part II verdict (§6.11): teacher-student with history encoders is partially solved, with specific open frontiers around context-length tradeoffs, multi-skill unification, and language conditioning.

6.2 Hwangbo 2019 — the actuator network

Hwangbo et al.'s 2019 Science Robotics paper [3] is the canonical "first rigorous demonstration" of end-to-end learned quadruped locomotion that transfers zero-shot to hardware. Its algorithmic contribution is the actuator network: a neural network trained on real ANYmal motor data — joint state history mapped to joint torque — that replaces the idealized actuator model inside the simulator. The simulator's idealization (a desired joint position produces the torque the PD control law would produce) is wrong for real motors; the actuator network learns the real mapping from recorded hardware data and injects that learned dynamics into simulation.

The mechanism is a form of grey-box system identification. Rather than estimate motor parameters analytically (stiffness, damping, backlash, temperature-dependent friction) and plug them into a first-principles model, Hwangbo and colleagues train a small MLP on recorded hardware data: a short history of joint state and commanded position as input, measured joint torque as output. The MLP becomes the actuator model inside the simulator's physics loop. Policies trained against this simulator, rather than against the idealized baseline, transfer to hardware because the simulator's dynamics now include the actuator's real behavior.
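The difference between the idealized PD actuator and a model fitted to recorded data can be illustrated with a toy example. This is a minimal sketch under loud assumptions: the "real" motor is a made-up function that responds to the position error two steps late, the gains are illustrative rather than ANYmal's, and a linear model fitted by SGD stands in for the paper's MLP. All function names are hypothetical.

```python
import random

KP, KD = 20.0, 0.5  # illustrative PD gains (assumption, not ANYmal's values)

def ideal_pd_torque(err, vel):
    """Idealized simulator actuator: torque follows the PD law instantly."""
    return KP * err - KD * vel

def real_torque(err_hist, vel_hist):
    """Hypothetical 'real' motor: reacts to the position error two steps late."""
    return KP * err_hist[0] - KD * vel_hist[-1]

def make_dataset(n, rng):
    """Features [e(t-2), e(t-1), e(t), v(t-2), v(t-1), v(t)] and measured torque."""
    errs = [rng.uniform(-1.0, 1.0) for _ in range(n + 2)]
    vels = [rng.uniform(-1.0, 1.0) for _ in range(n + 2)]
    data = []
    for t in range(2, n + 2):
        e_hist, v_hist = errs[t - 2:t + 1], vels[t - 2:t + 1]
        data.append((e_hist + v_hist, real_torque(e_hist, v_hist)))
    return data

def predict(weights, feats):
    return sum(w * f for w, f in zip(weights, feats))

def fit_actuator_model(data, epochs=20, lr=0.05):
    """Plain SGD on squared error; a linear stand-in for the paper's MLP."""
    weights = [0.0] * 6
    for _ in range(epochs):
        for feats, target in data:
            residual = target - predict(weights, feats)
            weights = [w + lr * residual * f for w, f in zip(weights, feats)]
    return weights

def mse(pairs):
    return sum((a - b) ** 2 for a, b in pairs) / len(pairs)

rng = random.Random(0)
train, test = make_dataset(500, rng), make_dataset(200, rng)
weights = fit_actuator_model(train)
mse_learned = mse([(predict(weights, f), y) for f, y in test])
mse_ideal = mse([(ideal_pd_torque(f[2], f[5]), y) for f, y in test])
print(f"learned model MSE: {mse_learned:.6f}, ideal PD MSE: {mse_ideal:.2f}")
```

On held-out samples the fitted model reproduces the delayed response while the instantaneous PD law does not. That is the gap the actuator network is meant to close inside the simulator.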

The results quantify the change. The learned policy achieves 1.6 m/s forward speed — approximately 2× the prior hand-tuned controller on the same robot [3]. The cost of transport is reduced by 25%. The policy recovers from 80 out of 100 initial fall poses. These are hardware numbers, not simulation numbers; they establish that the sim-to-real gap, previously presumed unclosable without per-scenario manual tuning, can be closed by a well-characterized actuator model learned from data.

Hwangbo 2019's contribution is three-fold. First, it is a system demonstration — a complete policy trained in simulation, deployed on hardware, outperforming the best prior hand-engineered controller. Second, it is an algorithmic contribution — the actuator network architecture and training recipe. Third, it is a community claim — that the sim-to-real program is credible, and that the next generation of work should assume success rather than treat it as an open research question. All three claims landed.

6.3 Lee 2020 — teacher-student with privileged information

Hwangbo 2019's policy was trained from scratch against the actuator-network-augmented simulator. Lee, Hwangbo, Wellhausen, Koltun, and Hutter's 2020 Science Robotics paper [6] introduced the teacher-student paradigm that became the community default. The teacher is trained with privileged information — full terrain height-map, contact force ground truth, environment-parameter ground truth — that the deployed robot cannot directly access. The student is a proprioception-only policy that receives only the sensor readings actually available on hardware. The student is trained not from RL rewards directly but via behavioral cloning plus DAgger-style online distillation from the teacher.

Why this works is instructive. The teacher has the easier RL problem: it observes the true state of its environment, so its policy gradient has less variance. The student has the harder inference problem: it must compensate for what it cannot see. But the student does not need to solve the RL problem; it only needs to imitate the teacher's actions given its own (impoverished) observations. The student's difficulty is moved from RL optimization into supervised learning from demonstration, which is statistically cheaper.
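The distillation loop can be sketched on a toy problem. Everything here is an assumption made for illustration: a 1-D world `x' = x + a + bias` with a hidden per-episode `bias`, a hand-written privileged teacher, a linear student that sees only its current state plus one step of history, and normalized LMS updates standing in for gradient-based behavioral cloning. The DAgger ingredient is that the student's own rollouts generate the visited states, while the teacher supplies the labels.

```python
import random

def teacher_action(x, bias):
    """Privileged teacher: sees the hidden bias and cancels it in one step."""
    return -x - bias

def student_action(w, feats):
    return sum(wi * fi for wi, fi in zip(w, feats))

def rollout_student(w, rng, steps=8):
    """Run the student in the toy world and label every visited observation
    with the action the teacher would have taken there."""
    bias = rng.uniform(-1.0, 1.0)           # privileged: hidden from the student
    x_prev, a_prev = rng.uniform(-1.0, 1.0), 0.0
    x = x_prev + a_prev + bias
    labelled = []
    for _ in range(steps):
        feats = [x, x_prev, a_prev]          # current state plus one-step history
        labelled.append((feats, teacher_action(x, bias)))
        a = student_action(w, feats)         # the student, not the teacher, drives
        x_prev, a_prev, x = x, a, x + a + bias
    return labelled

def fit(w, dataset, rng, epochs=5, step=0.5):
    """Behavioral cloning via normalized LMS updates on the aggregated data."""
    for _ in range(epochs):
        rng.shuffle(dataset)
        for feats, target in dataset:
            norm = sum(f * f for f in feats) + 1e-9
            err = target - student_action(w, feats)
            w = [wi + step * err * fi / norm for wi, fi in zip(w, feats)]
    return w

def dagger(iterations=5, episodes=40, seed=0):
    rng = random.Random(seed)
    w, dataset = [0.0, 0.0, 0.0], []
    for _ in range(iterations):
        for _ in range(episodes):
            dataset.extend(rollout_student(w, rng))  # aggregate, never discard
        w = fit(w, dataset, rng)
    return w

weights = dagger()
print([round(wi, 3) for wi in weights])
```

In this toy the hidden bias is recoverable from history as `x - x_prev - a_prev`, so the teacher's action is exactly expressible as `-2*x + x_prev + a_prev` and the student can match it without ever observing the bias. Real terrains and robots offer no such closed form, which is why the student there is a learned history encoder.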

The results are the paper's second signal contribution. The deployed student policy achieves 100% success over a 2.5-km outdoor hike across moss, mud, and snow [6]. The hike is not a demo reel; it is a multi-hour autonomous deployment with zero falls. Average forward speed is 1.0 m/s. The paper is the first rigorous demonstration that a policy trained entirely in simulation, distilled through teacher-student from privileged information, can deploy on hardware across a statistically meaningful real-world distribution.

The impact on the field was immediate. Teacher-student training with privileged information is now the default structure for nearly every humanoid RL paper published from 2022 onward. Sections 6.4–6.6 of this chapter all inherit this structure; the VLA chapter (Chapter 10) inherits it at the larger scale of VLA teacher-student distillation.

6.4 Kumar 2021 — RMA and implicit adaptation

Lee 2020's teacher saw the environment; its student had to compensate blind. A natural question follows: could the student learn to infer the environment — not from ground truth, but from its own state-action history? Kumar, Fu, Pathak, and Malik's Rapid Motor Adaptation (RMA) paper [9] at RSS 2021 answered yes.

RMA is a two-phase training procedure. Phase 1 trains a base policy that receives, as privileged input, an extrinsics vector encoding environment parameters (mass, friction, motor strength). Phase 2 trains an adaptation module that regresses the extrinsics vector from the robot's recent state-action history, typically the last 50 timesteps. At deployment, the privileged extrinsics are no longer available, so both modules run on the robot: the adaptation module infers the extrinsics from the history, and the base policy conditions on that estimate to produce joint commands.
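The deployment-time data flow can be sketched as follows. This is only a structural illustration under stated assumptions: `adaptation_module` and `base_policy` are hand-written stand-ins for the two learned networks, the extrinsics are reduced to one scalar actuator gain, and the toy dynamics `state + gain * action` are invented for the example. In this sketch both functions are called on every control step.

```python
from collections import deque

HISTORY_LEN = 50  # the "last 50 timesteps" window mentioned in the text

def adaptation_module(history):
    """Stand-in for the learned phase-2 regressor: estimate the extrinsics
    (here a single scalar actuator gain) from state-action history."""
    entries = list(history)
    ratios = [(s_next - s) / a
              for (s, a), (s_next, _) in zip(entries, entries[1:])
              if abs(a) > 1e-6]
    return sum(ratios) / len(ratios) if ratios else 1.0  # prior guess with no data

def base_policy(state, target, z_hat):
    """Stand-in for the phase-1 policy: conditions on the extrinsics estimate."""
    return (target - state) / z_hat

def run_episode(true_gain, target=1.0, steps=5):
    history = deque(maxlen=HISTORY_LEN)
    state = 0.0
    for _ in range(steps):
        z_hat = adaptation_module(history)          # inferred, never given
        action = base_policy(state, target, z_hat)
        history.append((state, action))
        state = state + true_gain * action          # hidden dynamics
    return state, adaptation_module(history)

final_state, estimated_gain = run_episode(true_gain=0.5)
print(final_state, estimated_gain)
```

The first two steps act on the prior guess; once the history contains one state transition, the estimate matches the hidden gain and the policy reaches the target. RMA itself regresses a learned latent rather than a physical parameter, so the explicit ratio estimate here is a simplification.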

The contribution is the implicit system identification. Kumar et al. showed that the adaptation module could infer environment parameters with a surprisingly small network (0.9M parameters) and adapt to large parameter changes on sub-second timescales — recovery from a 100-kg payload swap in under 1 second [9]. Across a suite of challenging terrains, RMA achieved 70/80 traverse success — a substantial improvement over non-adapting baselines.

The conceptual shift RMA introduced, context as a learned latent rather than an estimated parameter vector, set up the Transformer-based history encoders of Sections 6.6 and 6.7. Within a year, the field's default history architecture shifted from "estimate the extrinsics explicitly" to "feed the history into an encoder and let the encoder's representation do the adaptation." The RMA paper is the moment this shift became credible.

6.5 Siekmann 2021 — biped proof-of-life

The 2019–2021 results were all on quadrupeds. Translation to bipedal humanoids was not automatic: bipeds are structurally less stable, have fewer support contacts at any moment, and operate closer to the boundary of LIPM's validity region (Chapter 1). Siekmann, Green, Warila, Fern, and Hurst's 2021 RSS paper [10] provided the biped proof-of-life. Deploying on the Cassie platform (Chapter 4's SEA counter-lineage), the paper demonstrates fully learned blind bipedal stair traversal: zero-shot sim-to-real on a commercially available biped.

The architectural choices are instructive. The policy is an LSTM over proprioceptive history, trained in MuJoCo with domain randomization over terrain slope, stair dimensions, mass, and actuator gains. Rewards encode periodic gait via clock-phase design — the HZD-derived reward shaping discussed in Chapter 2. The policy achieves 4 out of 4 successful blind stair climbs at 0.5 m/s forward speed, with no falls across approximately 30 trials [10]. Siekmann's 2020 predecessor paper [7] had demonstrated that LSTM history encoders outperform feedforward MLPs by 2–3× on gait disturbance rejection on the same platform; the 2021 paper is the deployed consequence.
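A clock-phase reward of the kind described above can be sketched in a few lines. This is a simplified illustration, not the paper's formulation: the hard swing/stance switch, the 50% swing ratio, and all function names are assumptions made for the example. The idea is that a periodic phase decides, per foot, whether ground force (during swing) or foot speed (during stance) is penalized.

```python
import math

def clock_inputs(phase):
    """Periodic clock signal appended to the policy's observation."""
    return math.sin(2 * math.pi * phase), math.cos(2 * math.pi * phase)

def in_swing(phase, offset, swing_ratio=0.5):
    """True while the foot with this phase offset should be in the air."""
    return (phase + offset) % 1.0 < swing_ratio

def foot_reward(phase, offset, foot_force, foot_speed):
    """Penalize ground force during swing and foot motion during stance."""
    if in_swing(phase, offset):
        return -abs(foot_force)
    return -abs(foot_speed)

def gait_reward(phase, left, right):
    """left and right are (force, speed) tuples; offsets 0 and 0.5 give walking."""
    return foot_reward(phase, 0.0, *left) + foot_reward(phase, 0.5, *right)

# At phase 0.25 the left foot should swing and the right foot should stand.
good = gait_reward(0.25, left=(0.0, 1.0), right=(100.0, 0.0))   # no penalty
bad = gait_reward(0.25, left=(100.0, 0.0), right=(0.0, 1.0))    # both feet penalized
print(good, bad)
```

Changing the per-foot phase offsets changes the gait the reward encourages, which is what makes this style of reward composable across gait types.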

The biped proof-of-life mattered because it licensed the field to treat humanoid RL as not merely extrapolated from quadruped results but separately demonstrated. The community pivoted from "RL works on legged robots" to "RL works on legged robots including bipeds" within a year. Siekmann, Godse, Fern, and Hurst's ICRA 2021 paper [11] used the same clock-phase reward composition to cover five common bipedal gaits from a single policy family, demonstrating that clock-phase design generalizes across gait types on Cassie with zero-shot sim-to-real.

6.6 Radosavovic 2024 — causal Transformer on full-size humanoid

Radosavovic, Xiao, Zhang, Darrell, Malik, and Sreenath's 2024 Science Robotics paper [12] is the canon's closing bracket. It demonstrates fully learned outdoor locomotion on a full-size humanoid, the Agility Robotics Digit, with a policy whose architecture marks the definitive shift from LSTM to Transformer.

The policy is a causal Transformer over a long window of proprioceptive observations and past actions. Trained in Isaac Gym with large-scale domain randomization, it converges in approximately 4 hours on 8 GPUs [12]. The deployed policy walks continuously over 1 km per session outdoors without falls, and recovers from pushes up to 80 N. A key empirical finding of the paper: increasing the context length that the Transformer attends to monotonically improves deployment robustness up to the tested limit. This observation, that more history helps the student adapt, is the 2024 version of Kumar 2021's implicit adaptation claim, and it sets up the Chapter 9 discussion of the System 1 architecture.
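What "causal" means for a history encoder can be shown with a bare single-head attention function. This is a minimal sketch, not the paper's architecture: it omits learned projections, multiple heads, positional encodings, and the MLP blocks, and the function name is hypothetical. It keeps only the property that matters for control, namely that the output at timestep t depends on timesteps up to t and never on later ones.

```python
import math

def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention where step t sees only steps <= t."""
    dim = len(queries[0])
    outputs = []
    for t, q in enumerate(queries):
        scores = [sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(dim)
                  for s in range(t + 1)]              # causal mask: skip s > t
        peak = max(scores)                            # subtract max for stability
        weights = [math.exp(sc - peak) for sc in scores]
        total = sum(weights)
        outputs.append([
            sum(w * values[s][d] for s, w in enumerate(weights)) / total
            for d in range(len(values[0]))
        ])
    return outputs

history = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # toy observation-action tokens
encoded = causal_attention(history, history, history)

# Changing the newest token must leave the encodings of earlier steps untouched.
altered = history[:2] + [[5.0, -3.0]]
encoded_altered = causal_attention(altered, altered, altered)
print(encoded[:2] == encoded_altered[:2])
```

Because earlier outputs never change when new tokens arrive, keys and values from past timesteps can be stored and reused at deployment instead of being recomputed each control step.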

Radosavovic 2024's follow-up work, Humanoid Locomotion as Next Token Prediction [13], reframes the control problem as autoregressive next-token prediction over a multiplexed stream of proprioception, velocity commands, and joint actions. Trained on a mixed corpus of simulated rollouts, model-based trajectories, and retargeted human video, the policy trains on 27 billion tokens and runs at 50 Hz onboard. The paper reports that adding human-video tokens improves push recovery by approximately 25% over the RL-only baseline — an early signal that the scaling recipe familiar from language modeling transfers to humanoid control when the tokenization and data sources are chosen appropriately.

The conceptual arc from Hwangbo 2019 to Radosavovic 2024 is: first close the sim-to-real gap with an actuator model → then train the student to compensate what it cannot see → then let the student infer context from its own history → then let the student's architecture scale via attention over long contexts. The five papers are independently valuable; the sequence is what established the paradigm.

6.7 History encoders — TCN, LSTM, Transformer

Running alongside the five-paper canon is an architectural evolution in how the RL policy encodes its recent observation-action history. The observation itself — base linear and angular velocity, gravity vector in the base frame, joint positions and velocities, recent actions, commanded velocity — provides instantaneous state. The history provides context for implicit adaptation [9] and disturbance recovery [10].

Temporal Convolutional Networks (TCN) were the first widely adopted choice, appearing in Lee 2020 and Kumar 2021. TCNs are cheap to train, have bounded receptive fields, and are numerically stable. Their drawback is that the receptive field must be chosen a priori, and extending it requires retraining.
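The fixed receptive field can be made concrete with the usual formula for a stack of dilated causal convolutions. The sketch assumes one convolution per layer, stride 1, and the same kernel size in every layer; the function name is hypothetical.

```python
def receptive_field(kernel_size, dilations):
    """Timesteps visible to one output of a stack of dilated causal conv layers
    (one convolution per layer, stride 1, shared kernel size)."""
    return 1 + (kernel_size - 1) * sum(dilations)

print(receptive_field(3, [1, 2, 4, 8]))       # 31 timesteps
print(receptive_field(3, [1, 2, 4, 8, 16]))   # 63 timesteps
```

Under these assumptions, four layers with kernel size 3 see 31 timesteps, so covering a 50-step history needs a fifth layer. That is an architectural change, and it means retraining.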

Long Short-Term Memory (LSTM) networks became the community default after Siekmann 2020 demonstrated the 2–3× gait-disturbance robustness gain. LSTMs carry recurrent state across timesteps; their receptive field is effectively unbounded, at the cost of non-parallelizable inference and a more complex training dynamic. Siekmann 2021, HOVER [19], and many humanoid platforms' production policies use LSTM-based policies.

Causal Transformers entered the field with Radosavovic 2024. The Transformer attends over the full recent history in parallel; inference is more memory-hungry than LSTM but can be cached efficiently at deployment. The Radosavovic papers' central empirical finding — that longer attention windows monotonically improve robustness — drove the 2024–2026 shift to Transformer-based policies in frontier company stacks.

The sequence TCN → LSTM → Transformer recapitulates the sequence in language modeling (TCN / WaveNet → LSTM / seq2seq → Transformer / GPT) with a lag of roughly five years. The robotics field's lag is not a failure of imagination; it reflects the fact that Transformer-quality humanoid data — the number and length of recorded trajectories — did not exist until GPU-parallel simulation (Chapter 5) generated enough of it to train on.

6.8 PPO, TD3, and the return of off-policy

The RL algorithm that trains the canonical policies is, with rare exceptions, Proximal Policy Optimization (PPO) [26]. PPO is on-policy, simple to implement, and numerically stable under the large batch sizes that GPU-parallel simulation enables. It is the default training algorithm for Isaac Gym / legged_gym / Humanoid-Gym and for the later papers in the five-paper canon; the earliest entries, Hwangbo 2019 and Lee 2020, used the closely related trust-region method TRPO.
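The core of PPO is its clipped surrogate objective, which can be written in a few lines. This is a sketch of that one term only, following the PPO paper [26]; it leaves out the value loss, entropy bonus, and advantage estimation, and the function name and the default `clip_eps=0.2` are choices made for this example.

```python
import math

def ppo_clip_loss(logp_new, logp_old, advantages, clip_eps=0.2):
    """Negative clipped surrogate objective, averaged over a batch."""
    total = 0.0
    for ln, lo, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(ln - lo)                                  # pi_new / pi_old
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        total += min(ratio * adv, clipped * adv)                   # pessimistic bound
    return -total / len(advantages)

# Ratio 1.5 with a positive advantage: the gain is capped at 1.2 * advantage.
print(ppo_clip_loss([math.log(1.5)], [0.0], [1.0]))
# Ratio 1.5 with a negative advantage: the penalty is not capped.
print(ppo_clip_loss([math.log(1.5)], [0.0], [-1.0]))
```

The asymmetry is the point: the objective stops rewarding the policy for moving further from the data-collecting policy, but never hides the cost of a move that made things worse.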

What is occasionally under-appreciated is that off-policy algorithms — SAC, TD3, and their descendants — continued to develop quietly and have begun to return to the humanoid-RL mainstream. Fujimoto, van Hoof, and Meger's TD3 (Twin Delayed DDPG) [2] addressed the Q-value overestimation problem that made DDPG unreliable, via clipped double-Q learning, delayed policy updates, and target-policy smoothing. TD3 was the off-policy workhorse for continuous control between 2018 and the PPO takeover in 2021.
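Two of the three TD3 mechanisms named above, clipped double-Q learning and target-policy smoothing, live in the computation of the critic's regression target. The sketch below shows that computation for a scalar action; the callables `actor_target`, `q1_target`, and `q2_target` are hypothetical stand-ins for the target networks, and the default noise settings are illustrative. The third mechanism, delayed policy updates, is a scheduling choice in the training loop and is not shown.

```python
import random

def td3_target(reward, done, next_state, actor_target, q1_target, q2_target,
               rng, gamma=0.99, noise_std=0.2, noise_clip=0.5, max_action=1.0):
    """Critic regression target with target-policy smoothing and clipped double-Q."""
    # Target-policy smoothing: perturb the target action with clipped noise.
    noise = max(-noise_clip, min(noise_clip, rng.gauss(0.0, noise_std)))
    next_action = max(-max_action,
                      min(max_action, actor_target(next_state) + noise))
    # Clipped double-Q: trust the more pessimistic of the two target critics.
    q_min = min(q1_target(next_state, next_action),
                q2_target(next_state, next_action))
    return reward + gamma * (1.0 - done) * q_min

rng = random.Random(0)
y = td3_target(1.0, 0.0, 0.3,
               actor_target=lambda s: 0.0,
               q1_target=lambda s, a: 10.0,   # optimistic critic
               q2_target=lambda s, a: 4.0,    # pessimistic critic
               rng=rng)
print(y)
```

With one critic estimating 10 and the other 4, the target uses 4, which is how the overestimation that destabilized DDPG is kept in check.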

Seo and colleagues' FastTD3 [22] reintroduces off-policy RL to the humanoid-control frontier. FastTD3 combines large-scale parallel simulation with large-batch updates, a distributional critic, and tuned hyperparameters. On the HumanoidBench locomotion–manipulation suite [27], FastTD3 solves tasks in under 3 hours on a single A100, outperforming PPO on rough-terrain domain-randomization tasks by 2–5× in wall-clock time [22]. The significance is less that any one algorithm has won than that the field can now afford algorithmic choice: off-policy is a viable production option for tasks where sample efficiency matters more than simplicity.

The return of off-policy is part of a broader signal: the GPU-parallel-simulation catalyst (Chapter 5) has matured enough that algorithm-level engineering matters again. When sample efficiency was bounded by CPU simulation, all algorithms were equally unaffordable; PPO's simplicity was its competitive advantage. With million-steps-per-second throughput, fine-grained algorithmic differences reappear.

6.9 Motion priors — DeepMimic, AMASS, LAFAN1, OmniRetarget, PHC

Much of what makes humanoid RL work for expressive tasks (not pure walking but whole-body motion that looks like human motion) is the motion prior: a reference trajectory the policy is rewarded for tracking. The motion-prior lineage traces to Peng, Abbeel, Levine, and van de Panne's DeepMimic [1], which tracks mocap clips with an RL policy whose reward is a weighted sum of pose, joint-velocity, end-effector, and center-of-mass tracking terms, with the reference pose indexed by a phase variable. The paper trained policies for 25 highly dynamic skills (backflip, cartwheel, running, and others) at over 80% success rate, and established the template for reference-motion RL that every subsequent whole-body humanoid RL paper builds on.
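A DeepMimic-style imitation reward can be sketched as a weighted sum of exponentiated tracking errors. The term names, weights, and error scales below follow my reading of the DeepMimic paper [1] (pose, joint velocity, end-effector, center of mass) and should be treated as assumptions; the function name and the dictionary layout are hypothetical.

```python
import math

# name: (weight, error scale); values are assumptions for illustration
TERMS = {
    "pose": (0.65, 2.0),
    "velocity": (0.10, 0.1),
    "end_effector": (0.15, 40.0),
    "center_of_mass": (0.10, 10.0),
}

def imitation_reward(squared_errors):
    """Weighted sum of exp(-scale * squared tracking error), one term per quantity.
    Each error is measured against the reference frame at the current phase."""
    return sum(weight * math.exp(-scale * squared_errors[name])
               for name, (weight, scale) in TERMS.items())

perfect = imitation_reward(dict.fromkeys(TERMS, 0.0))
sloppy = imitation_reward({"pose": 0.5, "velocity": 2.0,
                           "end_effector": 0.05, "center_of_mass": 0.1})
print(round(perfect, 3), round(sloppy, 3))
```

Because every term is bounded between 0 and its weight, the reward stays in [0, 1] and degrades smoothly as tracking worsens, which keeps the RL signal well scaled across very different clips.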

DeepMimic's requirement is a reference motion. The two dominant sources:

AMASS [5] is the de facto reference-motion archive: 15+ optical mocap datasets aggregated into a unified SMPL-H-parameterized corpus, totaling 40+ hours across 300+ subjects and 11,000+ motions. AMASS is the training corpus for DeepMimic derivatives, for HumanPlus [15], for HOVER [19], for GR00T N1's motion component (Chapter 9), and for almost every humanoid motion-tracking RL published 2020 onward.

LAFAN1 [8] is Ubisoft La Forge's smaller-but-higher-per-clip-quality mocap release: 77 sequences (roughly 4.6 hours at 30 Hz) across 5 actors. LAFAN1 is used alongside AMASS as a supplementary data source for higher-quality character motion.

The challenge with using AMASS and LAFAN1 directly is retargeting: human motion must be mapped to robot kinematics, which almost always mismatches. Naive retargeting produces foot skating, penetration, and infeasible contacts. The 2024–2025 contribution to solving this is OmniRetarget [24], which builds an interaction mesh between agent, terrain, and manipulated objects, minimizes Laplacian deformation between human and robot meshes, and enforces contact and kinematic constraints. OmniRetarget produces 8+ hours of physically feasible retargeted trajectories — zero foot-skating and zero penetration versus approximately 18% in prior baselines — and supports long-horizon scenarios such as 30-second humanoid parkour with a 5-term reward.

A related research direction: learned motion representations that abstract away retargeting entirely. Luo and colleagues' PHC (Perpetual Humanoid Controller) and the follow-up PULSE [20] train a VAE-like encoder-decoder on AMASS where the decoder is itself a motion-tracking policy. The resulting motion latent space becomes an action space for downstream RL, improving sample efficiency by approximately 10× versus joint-space RL and tracking 99%+ of AMASS with mean per-joint position error of roughly 25 mm.

The motion-prior stack — DeepMimic's reward template + AMASS's data archive + OmniRetarget's feasibility layer + PHC's learned representation — is what lifts humanoid RL from bare walking to expressive whole-body control, and it is the substrate on which Section 6.10's whole-body extensions sit.

6.10 Whole-body loco-manipulation extensions

The five-paper canon (Sections 6.2–6.6) solved locomotion. The 2023–2025 extensions pushed the recipe into whole-body motion, coupling locomotion with bimanual manipulation. Six systems define the extension space.

Expressive Whole-Body Control (ExBody) [14] introduced a decoupling: the upper body tracks mocap (CMU Mocap + AMASS subset retargeted to Unitree H1) while the lower body runs its own rough-terrain policy. Teacher-student distillation links the two. ExBody demonstrates Unitree H1 dancing while walking on 5-cm random bumps, with upper-body joint tracking MAE under 0.15 rad [14]. The decoupling pattern — locomotion and upper-body expression trained separately, composed at deployment — recurs in several downstream systems.

HumanPlus [15] builds an end-to-end pipeline from a single RGB video of a human to an imitating policy on Unitree H1. Off-the-shelf human-pose estimation produces SMPL-X poses; a retargeting layer maps them to H1; a shadow policy reward trains the humanoid in Isaac Gym to track the retargeted reference. With a 40-demonstration fine-tune, HumanPlus reaches 60–85% success across seven whole-body tasks (boxing, typing, cloth folding, ball tossing, etc.). The contribution is the accessibility — no mocap suite, just a phone camera.

H2O and OmniH2O [16, 17] push real-time teleoperation of full-size humanoids. H2O uses a single RGB camera observing the operator and a real-time retargeting + imitation-sanitizer pipeline to map the operator's whole-body motion onto Unitree H1 at approximately 50 ms latency. OmniH2O extends H2O to a Unitree H1 augmented with 5-finger Inspire Robots hands and a universal retargeting module that generalizes across operators; autonomous policies trained on 6 hours of teleoperation data reach 60–90% success on the trained tasks.

TWIST (Teleoperated Whole-body Imitation System) [23] combines VR-style teleoperation with a causal-Transformer policy distilled from mocap and teleop data; deployed on Unitree G1, it produces 25+ whole-body skills (cartwheels, jumps, dance, bimanual manipulation) from a single policy and reports push recovery up to 120 N lateral.

HOVER [19] is the 2024 unified whole-body controller: a 1.5-million-parameter policy that supports 15+ distinct control modes (joint PD, torque, inverse kinematics, footstep command, root velocity, etc.) via distillation from an oracle AMASS-trained motion-imitation teacher. HOVER runs at 200 Hz on Jetson-class edge GPUs, making it the closest public analog to the learned System 0 / System 1 pattern Chapter 9 develops.

Wang et al.'s Generalist [25] trains per-task expert policies (walking, running, sitting, dancing) in Isaac Lab, then distills them into a single generalist whole-body policy. The generalist stays within 3–5% of each specialist on 8 canonical tasks — a specialist-then-generalist recipe that outperforms joint multi-task RL in their comparison.

Three additional systems round out the whole-body frontier. Humanoid Parkour [18] demonstrates vision-conditioned humanoid parkour on Unitree H1 — gap crossing (0.5 m), platform climbing (0.4 m high), balance-beam traversal (0.3 m wide) — at 80%+ course completion, via a teacher-student pipeline with depth vision. Get-Up Policies [21] train a fall-recovery policy on Unitree G1 that stands up from arbitrary fall poses in under 6 seconds with 80–90% success. FALCON [Zhang et al., 2025] learns force-adaptive humanoid loco-manipulation, combining a whole-body policy with a force-sensing arm for tasks that require compliant contact — important for the Chapter 15 manipulation-data discussion.

The common theme across Section 6.10's systems: the five-paper canon gave the field locomotion, and the 2024–2025 systems are now composing that locomotion with upper-body expression, bimanual manipulation, and vision. The composition is not automatic — each system above navigates a specific coupling choice (decoupled vs unified, teleoperation-distilled vs reward-shaped, mocap-tracking vs shadow-learned) — but the fact of composition is the measurable progress.

Early precedent from manipulation. OpenAI's 2019 Rubik's-cube-with-a-robot-hand paper [4] prefigured the canonical stack at the end-effector scale: automatic domain randomization (ADR), a dexterous hand solving the cube zero-shot from simulation, and a policy with an LSTM history encoder. The paper is often underrated in the canon because it is a hand-manipulation demonstration rather than a locomotion one, but its techniques (ADR, LSTM history encoding, extensive simulation-scale domain randomization) fed directly into the 2019–2021 locomotion RL recipe. The paper is a useful reminder that the recipe's ingredients were on the shelf by 2019, and that the 2019–2024 canon organized them into the standard form.

6.11 Verdict and open questions

Catalyst 3 verdict: partially solved. The canonical recipe — Hwangbo 2019 actuator network → Lee 2020 teacher-student → Kumar 2021 RMA → Siekmann 2021 Cassie → Radosavovic 2024 full-size humanoid causal Transformer — is reproducible and deployable across Cassie, Digit, Unitree G1 and H1, Booster T1, Berkeley Humanoid, and several additional platforms. The history encoder moved TCN → LSTM → Transformer. Whole-body loco-manipulation extensions (Section 6.10) compose the canon with upper-body manipulation. What remains open are three specific frontiers:

- Context-length vs latency trade-off for onboard inference. Radosavovic 2024's finding that longer attention windows monotonically improve robustness [12] runs into a deployment wall at the context length where inference no longer fits the System 1 latency budget. The engineering of long-context on-board Transformers is an open research frontier. HOVER's 200-Hz inference on Jetson-class hardware [19] is the current public ceiling for multi-mode whole-body policies at edge scale.

- Multi-skill unification beyond a single Transformer. Each of the whole-body systems (HumanPlus, H2O, TWIST, HOVER, Generalist) reaches 60–90% success on its target skill set via distillation or reference-motion shaping. None yet produces a single policy that covers both broad-range locomotion and broad-range manipulation with success rates that would be commercially acceptable for deployment. The five-paper canon solved uniform-skill RL; the cross-skill generalization is the next target.

- Language conditioning of the System 0/1 policy without losing 1 kHz real-time guarantees. The VLA chapter (Chapter 10) will address this from the top-down (VLM at System 2, conditioning the System 1 policy). The bottom-up question — how the System 1 policy consumes language tokens without incurring Transformer inference latency that would break real-time guarantees — remains open.

The Chapter 5 verdict (simulation) and Chapter 6 verdict (learning) together imply the Chapter 7 open frontier: the sim-to-real correction layer is where the residual gaps the canon and the simulators cannot close must be absorbed. Chapter 7 takes that up.

6.12 Bridge to Chapter 7

The canonical recipe, the algorithms, the motion priors, and the whole-body extensions all assume that what trains in simulation will deploy on hardware. The sim-to-real correction that closes the residual gap — domain randomization over the right distribution, system identification where the simulator is wrong by more than DR can absorb, residual delta-action correction for the last few percent — is Chapter 7. The three strategies co-exist in the frontier stacks; their composition is the specific engineering that makes the catalysts converge.

References

1. Peng, X. B., Abbeel, P., Levine, S., & van de Panne, M. (2018). DeepMimic: Example-guided deep reinforcement learning of physics-based character skills. ACM SIGGRAPH. arXiv:1804.02717.

2. Fujimoto, S., van Hoof, H., & Meger, D. (2018). Addressing function approximation error in actor-critic methods. Proc. ICML. arXiv:1802.09477.

3. Hwangbo, J., Lee, J., Dosovitskiy, A., Bellicoso, D., Tsounis, V., Koltun, V., & Hutter, M. (2019). Learning agile and dynamic motor skills for legged robots. Science Robotics. arXiv:1901.08652.

4. Akkaya, I., Andrychowicz, M., et al. (2019). Solving Rubik's cube with a robot hand. arXiv preprint.

5. Mahmood, N., Ghorbani, N., Troje, N. F., Pons-Moll, G., & Black, M. J. (2019). AMASS: Archive of motion capture as surface shapes. Proc. ICCV. arXiv:1904.03278.

6. Lee, J., Hwangbo, J., Wellhausen, L., Koltun, V., & Hutter, M. (2020). Learning quadrupedal locomotion over challenging terrain. Science Robotics. arXiv:2010.11251.

7. Siekmann, J., Valluri, S., Dao, J., Bermillo, L., Duan, H., Fern, A., & Hurst, J. (2020). Learning memory-based control for human-scale bipedal locomotion. Proc. RSS. arXiv:2006.02402.

8. Harvey, F. G., Yurick, M., Nowrouzezahrai, D., & Pal, C. (2020). Robust motion in-betweening (LAFAN1 dataset). ACM TOG / SIGGRAPH.

9. Kumar, A., Fu, Z., Pathak, D., & Malik, J. (2021). RMA: Rapid motor adaptation for legged robots. Proc. RSS. arXiv:2107.04034.

10. Siekmann, J., Green, K., Warila, J., Fern, A., & Hurst, J. (2021). Blind bipedal stair traversal via sim-to-real reinforcement learning. Proc. RSS. arXiv:2105.08328.

11. Siekmann, J., Godse, Y., Fern, A., & Hurst, J. (2021). Sim-to-real learning of all common bipedal gaits via periodic reward composition. Proc. IEEE ICRA. arXiv:2011.01387.

12. Radosavovic, I., Xiao, T., Zhang, B., Darrell, T., Malik, J., & Sreenath, K. (2024). Real-world humanoid locomotion with reinforcement learning. Science Robotics. arXiv:2303.03381.

13. Radosavovic, I., et al. (2024). Humanoid locomotion as next token prediction. NeurIPS. arXiv:2402.19469.

14. Cheng, X., et al. (2024). Expressive whole-body control for humanoid robots. Proc. RSS. arXiv:2402.16796.

15. Fu, Z., et al. (2024). HumanPlus: Humanoid shadowing and imitation from humans. Proc. CoRL. arXiv:2406.10454.

16. He, T., et al. (2024). Learning human-to-humanoid real-time whole-body teleoperation (H2O). Proc. IEEE/RSJ IROS. arXiv:2403.04436.

17. He, T., et al. (2024). OmniH2O: Universal and dexterous human-to-humanoid whole-body teleoperation and learning. Proc. CoRL. arXiv:2406.08858.

18. Zhuang, Z., et al. (2024). Humanoid parkour learning. Proc. CoRL. arXiv:2406.10759.

19. He, T., et al. (2024). HOVER: Versatile neural whole-body controller for humanoid robots. Proc. IEEE ICRA 2025. arXiv:2410.21229.

20. Luo, Z., et al. (2024). Universal humanoid motion representations for physics-based control (PHC/PULSE). Proc. ICLR. arXiv:2310.04582.

21. He, X., et al. (2025). Learning getting-up policies for real-world humanoid robots. arXiv preprint 2502.12152.

22. Seo, Y., et al. (2025). FastTD3: Simple, fast, and capable reinforcement learning for humanoid control. arXiv preprint 2505.22642.

23. Ze, Y., et al. (2025). TWIST: Teleoperated whole-body imitation system. arXiv preprint 2505.02833.

24. Yang, H., et al. (2025). OmniRetarget: Interaction-preserving data generation for humanoid whole-body loco-manipulation and scene interaction. arXiv preprint 2509.26633.

25. Wang, X., et al. (2025). From experts to a generalist: Toward general whole-body control for humanoid robots. arXiv preprint 2506.12779.

26. Schulman, J., Wolski, F., Dhariwal, P., Radford, A., & Klimov, O. (2017). Proximal policy optimization algorithms. arXiv preprint 1707.06347.

27. Sferrazza, C., Huang, D.-M., Lin, X., Lee, Y., & Abbeel, P. (2024). HumanoidBench: Simulated humanoid benchmark for whole-body locomotion and manipulation. Proc. RSS. arXiv:2403.10506.