Chapter 5: GPU Massively Parallel Simulation

5.1 The role of simulation, before and after

Before 2021, simulation in robotics was a pre-deployment verification step: a policy trained on real data (or tuned by hand) was tested in a physics simulator such as Gazebo, MuJoCo (CPU), or PyBullet before being uploaded to hardware. Simulation was the last check, not the training ground. After 2021, simulation became the training ground itself, and the hardware became the validation step. This inversion is what Chapter 5 audits.

The mechanism of the inversion is specific: physics simulators and neural-network policies now share GPU memory, and observations and rewards remain in CUDA tensors directly consumed by PyTorch or JAX. The CPU–GPU transfer bottleneck that bounded prior simulators is eliminated, and a single workstation can step thousands of parallel environments at once, accumulating simulated experience far faster than real time. This is the sample engine that domain randomization (Chapter 7) and teacher-student RL (Chapter 6) both depend on. Without it, the catalysts named elsewhere in Part II remain theoretically compelling but practically unaffordable.

The chapter proceeds through four claims. First (§5.2), it traces the Isaac Gym inflection point [2] and Rudin et al.'s "learning to walk in minutes" [3] as the 2021 regime change. Second (§5.3), it maps the 2026 simulator landscape — Isaac Lab / Orbit, MuJoCo Playground, Humanoid-Gym, Booster Gym, Genie Sim, Genesis — and explains why the field settled on a small number of standards rather than one winner. Third (§5.4), it connects GPU parallelism to what it actually enables: domain randomization at a statistically meaningful scale, sim-to-sim verification as a bug filter, and the observation of "15 minutes to a deployable policy" as the practical 2025 benchmark. Fourth (§5.5), it surveys the contact-fidelity frontier and the differentiable-simulation research program [9] as the open research direction. The chapter closes with the Part II verdict (§5.6): GPU parallel simulation is solved and standardized for locomotion RL throughput; contact accuracy for manipulation and deformable regimes remains the open frontier.

5.2 Isaac Gym 2021 — the inflection point

NVIDIA's Isaac Gym, introduced at NeurIPS 2021 [2], is the 2021 inflection point. Its technical contribution is architectural rather than algorithmic: PhysX rigid-body physics and PyTorch neural-network inference co-locate on a single GPU; environment observations and rewards are CUDA tensors directly consumed by the policy without leaving GPU memory. The CPU–GPU transfer latency that bottlenecked prior simulators — even GPU-accelerated ones whose physics ran on GPU but whose learning loop ran on CPU — is simply eliminated.

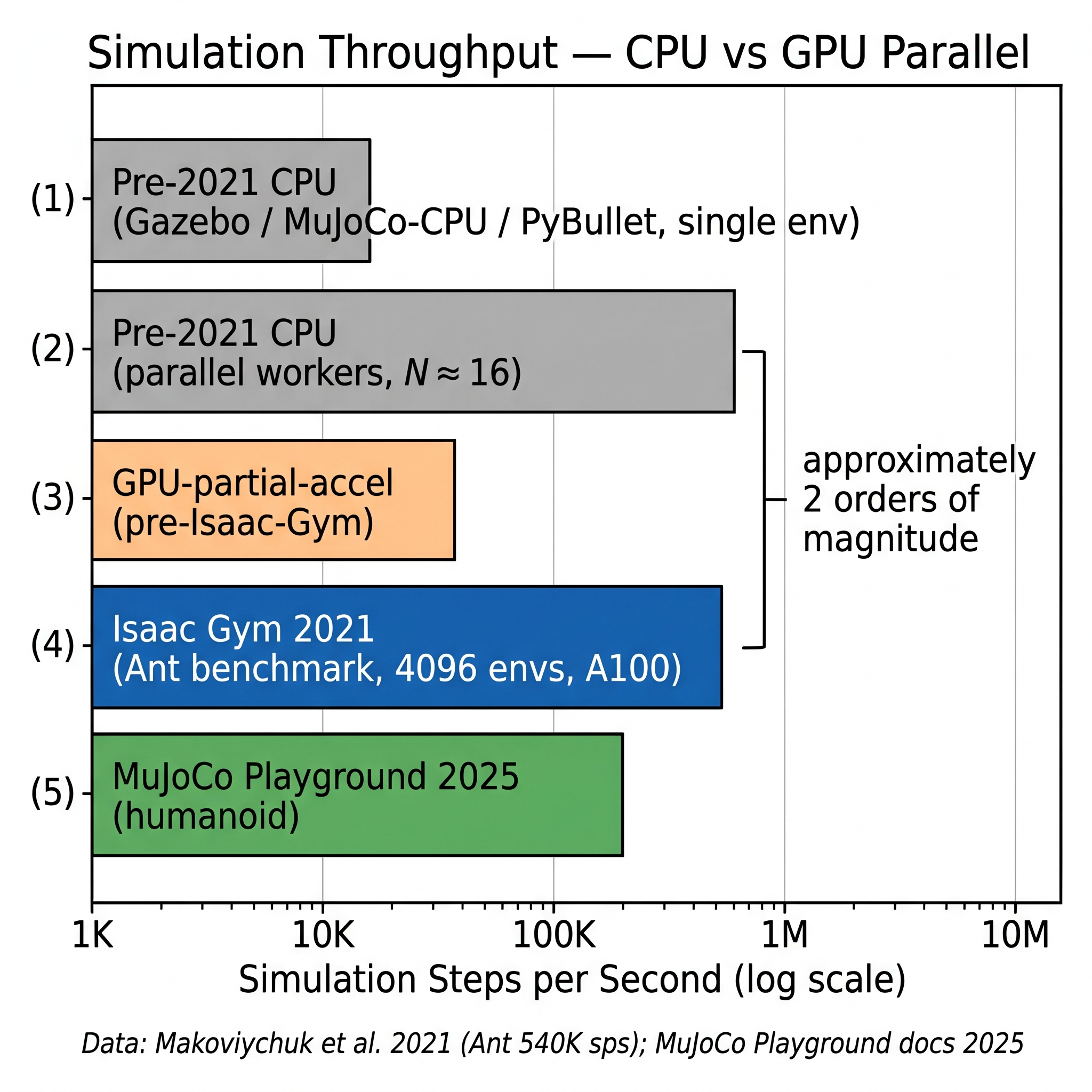

The throughput numbers establish the regime change. Isaac Gym reports approximately 540,000 simulation steps per second on the Ant benchmark with 4,096 parallel environments on a single NVIDIA A100 [2] — 2–3 orders of magnitude faster than prior simulators on equivalent hardware. On the ANYmal locomotion task, a policy converges in under two minutes of wall-clock training on a single GPU. On the Shadow Hand cube-reorientation task, a policy trains to a useful level in approximately 35 minutes on a single GPU — a task that on pre-2021 CPU-based stacks would have required days or been abandoned entirely.
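As a rough back-of-the-envelope check on the Ant figures, the sketch below converts the reported aggregate rate into a per-environment rate and into wall-clock time for a fixed sample budget. The 100-million-step budget is an illustrative assumption, not a number from [2]; the slower baselines are simply the reported rate divided by 10² and 10³.

```python
# Back-of-the-envelope throughput arithmetic for the Ant figures quoted above.
AGGREGATE_STEPS_PER_S = 540_000   # reported aggregate rate [2]
NUM_ENVS = 4_096                  # parallel environments [2]
SAMPLE_BUDGET = 100_000_000       # ASSUMED training budget, for illustration only


def wall_clock_seconds(budget: int, steps_per_s: float) -> float:
    """Time needed to collect `budget` environment steps at a given rate."""
    return budget / steps_per_s


# Each individual environment advances far more slowly than the aggregate.
per_env_rate = AGGREGATE_STEPS_PER_S / NUM_ENVS
gpu_seconds = wall_clock_seconds(SAMPLE_BUDGET, AGGREGATE_STEPS_PER_S)

# Baselines that are 2 and 3 orders of magnitude slower than the reported rate.
slow_seconds = {
    k: wall_clock_seconds(SAMPLE_BUDGET, AGGREGATE_STEPS_PER_S / 10**k)
    for k in (2, 3)
}

print(f"per-environment rate: {per_env_rate:.0f} steps/s")
print(f"at the reported rate: {gpu_seconds / 60:.1f} min")
for k, seconds in slow_seconds.items():
    print(f"{k} orders of magnitude slower: {seconds / 3600:.1f} h")
```

Each environment advances at only about 132 steps per second; the aggregate figure comes from batching thousands of environments, not from making any single one faster.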

Rudin, Hoeller, Reist, and Hutter's CoRL 2021 paper [3] is the companion that turned Isaac Gym's architectural capacity into an RL recipe. Using Isaac Gym, the paper trains ANYmal locomotion on 4,096 parallel robot instances on a single workstation, introduces a terrain curriculum (flat, slopes, stairs, gaps) that progresses difficulty with training, and uses a PPO-based policy with a specific observation and reward decomposition that became the community default. The results: flat-ground walking learned in approximately 4 minutes of wall-clock; rough-terrain policy in approximately 20 minutes on a single GPU. Prior CPU baselines for the same tasks required several days. The paper also releases legged_gym, the task suite that became the de facto reference stack for quadruped and eventually humanoid RL over the following three years.
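The idea of a terrain curriculum that "progresses difficulty with training" can be sketched as a per-robot difficulty level updated at the end of each episode. This is a minimal sketch and not the legged_gym implementation: the function names, the promote/demote thresholds, the number of levels, and the stand-in for a simulated episode are all hypothetical choices made for illustration.

```python
import random

MAX_LEVEL = 9            # hypothetical: ten difficulty levels, 0..9
PROMOTE_FRACTION = 0.8   # hypothetical: promote at >= 80% of the commanded distance
DEMOTE_FRACTION = 0.4    # hypothetical: demote below 40% of the commanded distance


def update_terrain_level(level: int, distance: float, commanded: float) -> int:
    """Return the next difficulty level for one robot after an episode."""
    if commanded <= 0.0:
        return level
    progress = distance / commanded
    if progress >= PROMOTE_FRACTION:
        return min(level + 1, MAX_LEVEL)
    if progress < DEMOTE_FRACTION:
        return max(level - 1, 0)
    return level


def run_curriculum(num_robots: int, episodes: int, seed: int = 0) -> list[int]:
    """Toy rollout in which average progress falls as the terrain gets harder."""
    rng = random.Random(seed)
    levels = [0] * num_robots
    for _ in range(episodes):
        for i, level in enumerate(levels):
            # Stand-in for a simulated episode (no physics involved here).
            progress = rng.random() * (1.0 - 0.05 * level) + 0.3
            levels[i] = update_terrain_level(level, progress * 5.0, 5.0)
    return levels


levels = run_curriculum(num_robots=4096, episodes=50)
print(f"levels after training: min={min(levels)}, max={max(levels)}")
```

Because every robot carries its own level, a batch of thousands of environments spreads across the difficulty range automatically, which is one reason the curriculum pairs naturally with massively parallel simulation.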

The two papers together are the 2021 inflection. Subsequent work is not about whether GPU parallel simulation is possible, but about which simulator to use, which task suite, and which specific variant of the Isaac Gym / legged_gym recipe to reproduce. The 2019 Hwangbo et al. actuator-network paper (Chapter 6) was trained on CPU-based stacks with many hours per iteration; the equivalent work post-2021 runs in minutes. The catalyst's effect is measurable in research cycle time, and from there in research output.

Figure: […] [2]; MuJoCo Playground 2025 humanoid ≈ 2×10⁵. The ~2-orders-of-magnitude gap between bars (2) and (4) is the 2021 regime change. Illustration by author (Gemini-assisted reconstruction).


5.3 The 2026 simulator landscape

By 2026 the GPU-parallel-simulation landscape has stabilized into a small number of production stacks with well-understood tradeoffs. Six are worth naming in detail.

Isaac Lab (NVIDIA, formerly Orbit). Isaac Lab — the successor to Orbit [1] — is NVIDIA's production-grade RL platform built on Isaac Sim, which in turn is built on the PhysX rigid-body engine. Orbit introduced the environment manager, reward composer, and cross-simulator asset pipeline that Isaac Lab formalized into a toolchain; 20+ tasks across manipulation and locomotion are supported, with 1,000+ parallel environments feasible on a single A100 [1]. Isaac Lab is the default choice for production frontier-company stacks; Figure, Agility, and NVIDIA's own GR00T pipeline all build against it. The price of Isaac Lab's polish is dependency on NVIDIA's SDK ecosystem — Isaac Sim, Omniverse, OpenUSD, Isaac Replicator — which academic groups sometimes find heavy.

MuJoCo Playground (Google DeepMind, 2025). Zakka, Tassa, and colleagues' MuJoCo Playground [7] builds on MuJoCo MJX — the JAX port of MuJoCo — to provide a GPU/TPU-accelerated unified framework combining DM Control, legged_gym-style tasks, and manipulation benchmarks. Throughput reaches approximately 200,000 environment steps per second on a single A100 for humanoid RL; TPU training on 4× TPU v3 reaches walking in approximately 10 minutes. Playground supports Unitree G1, H1, Booster T1, Apollo, and others with recipes for PPO and SAC. Its contact accuracy is superior to PhysX in certain regimes — particularly for rigid contacts with small time steps, which matter for manipulation — and its JAX-native API is lighter-weight than Isaac Lab's for researchers comfortable with JAX. MuJoCo Playground is positioned as the MuJoCo-first alternative to Isaac Lab and is the default choice for research groups whose infrastructure aligns with JAX / TPU workflows.

Humanoid-Gym (2024). Gu, Zhang, and colleagues' Humanoid-Gym [4] is an open-source RL framework specialized for humanoids, built on legged_gym and Isaac Gym. It adds humanoid-specific rewards (stance, step period, upper-body regularization) and a sim-to-sim pipeline that validates Isaac Gym policies in MuJoCo before real deployment — the Isaac Gym → MuJoCo → hardware pattern that became the community default for deploying humanoid policies. Humanoid-Gym reports sim-to-sim drift in joint torques under 8%, and ships trained policies for RobotEra XBot-S and XBot-L that walk zero-shot on flat ground. Humanoid-Gym's contribution is less the simulator itself than the recipe — the specific reward terms, network architecture choices, and deployment workflow that humanoid RL practitioners adopt as the default.

Booster Gym (2025). Wang, Chen, and colleagues' Booster Gym [6], from Tsinghua University and Booster Robotics, is the Chinese open-source counterpart covering training-to-deployment for the Booster T1 platform. Booster Gym demonstrates zero-shot sim-to-real omnidirectional walking on T1 and recovery from 50 N lateral pushes. Its role in the ecosystem is to extend the Humanoid-Gym pattern to a Booster-specific embodiment with Booster-specific hardware parameters; each new humanoid manufacturer (Unitree, Fourier, Booster, etc.) has now produced an equivalent per-platform gym repository, and the uniformity of the pattern across platforms is itself the mark of the catalyst's maturity.

Genie Sim 3.0 (AgiBot, 2026). AgiBot's Genie Sim 3.0 [10] is the proprietary production simulator backing the GO-1 and GO-2 policy training pipelines (Chapter 10). Its architectural claim is that physics and rendering are decoupled: the physics solver runs on GPU at 1 kHz while the photorealistic renderer runs in parallel, allowing large-scale RL parallelism and high-fidelity visual observations simultaneously. The paper reports 5–10× more photorealistic frames per second than AgiBot's prior internal stack. Genie Sim is positioned alongside Genie Studio (deployment platform) and the RLinf framework as AgiBot's vertically integrated answer to the Isaac Lab + real-data + deployment pipeline.

Genesis (2024). Genesis [11] is the most ambitious of the 2024–2026 simulator releases. It claims a unified physics engine supporting rigid, soft, fluid, cloth, and hybrid bodies in a single solver, with reported 43 million FPS for a Franka + plane scene on consumer hardware — approximately 430,000× real-time. Genesis also includes a generative data engine that converts natural-language prompts into interactive scenes, task proposals, reward functions, character motions, and physics videos. Its throughput claims have not been fully reproduced by third parties at the time of writing, and its adoption as a production stack is nascent. Genesis is the simulator whose thesis — unified rigid/soft/fluid physics with generative scene specification — the 2026–2028 research community will either validate or retrench from; the chapter notes Genesis here to mark the research bet, not to endorse the throughput claim.
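The two Genesis figures are linked by simple arithmetic: dividing the claimed real-time factor by the claimed frame rate gives the simulated time that each frame must advance for the claims to agree. The sketch below makes that relation explicit. The resulting 10 ms step is an inference from the two quoted numbers, not a figure stated in the text, and the function names are hypothetical.

```python
CLAIMED_FPS = 43_000_000            # frames per wall-clock second, as quoted above
CLAIMED_REALTIME_FACTOR = 430_000   # simulated seconds per wall-clock second, as quoted above


def implied_step_seconds(fps: float, realtime_factor: float) -> float:
    """Simulated time per frame that makes the two claims consistent."""
    return realtime_factor / fps


def realtime_factor(fps: float, step_seconds: float) -> float:
    """Simulated seconds per wall-clock second for a frame rate and step size."""
    return fps * step_seconds


dt = implied_step_seconds(CLAIMED_FPS, CLAIMED_REALTIME_FACTOR)
print(f"implied step: {dt * 1000:.0f} ms ({1 / dt:.0f} frames per simulated second)")
```

If the frame rate is an aggregate over a batch of parallel scenes, the real-time factor is an aggregate as well, not a per-scene speedup; that distinction matters when comparing the number against single-environment simulators.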

The field's settling on roughly these six simulators — one NVIDIA-centric production stack, one JAX-centric research stack, two humanoid-gym-pattern open-source frameworks, one company-proprietary vertical-integration stack, and one ambitious unified-physics research stack — mirrors what happened in the deep-learning framework ecosystem between 2015 and 2020. Two or three dominant production tools coexist with a long tail of specialized research tools; throughput is similar across the top-tier options; choice is driven by team skills and deployment targets more than by throughput deltas. The commoditization is a feature of the catalyst's maturity, not a sign of research dormancy.

5.4 What the sample engine enables

The measurable consequence of GPU parallel simulation for humanoid RL is not throughput for throughput's sake. It is the practical reachability of statistically meaningful domain randomization, sim-to-sim verification, and rapid iteration. Three concrete illustrations follow, together with the shared benchmark against which the results are evaluated.

Domain randomization at scale. Chapter 7 develops domain randomization (DR) in detail. The short version is that DR covers the sim-to-real gap by training over a distribution of simulated environments wide enough that the real robot is inside that distribution with high probability. DR's sample requirement scales with the dimensionality of the randomized parameters — for locomotion, 10 to 30 parameters (friction, mass, CoM, motor gains, terrain height, latency, noise spectrum, etc.). At 10,000 environment-steps per second on CPU, covering a 30-dimensional random distribution statistically requires weeks. At 1,000,000 environment-steps per second on a single GPU, the same coverage takes hours. The difference between weeks and hours is the difference between DR being a research idea and DR being the production default.
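The weeks-versus-hours comparison can be made concrete with a small sketch: draw one set of physics parameters per parallel environment, then compare the wall-clock cost of the same step budget at the two rates quoted above. The parameter names and ranges are hypothetical placeholders (real ranges are robot-specific), and the two-week budget is an assumed instance of "weeks".

```python
import random

# HYPOTHETICAL randomization ranges; real ranges are robot- and task-specific.
DR_RANGES = {
    "friction": (0.4, 1.2),
    "added_mass_kg": (-1.0, 3.0),
    "com_offset_m": (-0.03, 0.03),
    "motor_gain_scale": (0.9, 1.1),
    "latency_s": (0.0, 0.02),
}


def sample_environment(rng: random.Random) -> dict[str, float]:
    """Draw one set of physics parameters, uniformly within each range."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in DR_RANGES.items()}


def hours_to_collect(total_steps: float, steps_per_s: float) -> float:
    """Wall-clock hours needed to simulate `total_steps` at a given rate."""
    return total_steps / steps_per_s / 3600.0


rng = random.Random(0)
envs = [sample_environment(rng) for _ in range(4096)]  # one draw per environment

# ASSUMED budget: the steps a 10,000 steps/s CPU stack collects in two weeks.
budget = 10_000 * 14 * 24 * 3600
cpu_hours = hours_to_collect(budget, 10_000)
gpu_hours = hours_to_collect(budget, 1_000_000)
print(f"CPU: {cpu_hours / 24:.0f} days, GPU: {gpu_hours:.2f} hours")
```

The 100× rate difference maps two weeks onto a few hours, which is the gap between an experiment run once per paper and one run several times per day.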

Sim-to-sim as a bug filter. Humanoid-Gym's Isaac Gym → MuJoCo → hardware pattern [4] has become the community-standard deployment recipe. The rationale: a policy trained against Isaac Gym's PhysX dynamics will transfer to MuJoCo's different contact model only if it is robust to drift in the environment physics, and that same robustness is a precondition for transfer to the real robot. Sim-to-sim verification is cheap because both simulators are GPU-accelerated, and it is remarkably effective: Humanoid-Gym reports joint-torque drift under 8% between Isaac Gym and MuJoCo for well-trained policies, and policies that fail sim-to-sim also fail in hardware. Sim-to-sim therefore filters out bugs that would otherwise surface only at deployment.
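One way to turn a drift figure into an automated gate is sketched below. The metric used here (RMS of the torque difference normalized by the RMS of the reference trace) is an assumption chosen for illustration; the text does not specify how Humanoid-Gym computes its drift number. The torque traces are synthetic stand-ins for logs recorded from the two simulators, and only the 8% threshold is taken from the text.

```python
import math


def relative_rms_drift(reference: list[float], candidate: list[float]) -> float:
    """RMS of the difference, normalized by the RMS of the reference trace."""
    if len(reference) != len(candidate) or not reference:
        raise ValueError("traces must be non-empty and of equal length")
    diff_sq = sum((r - c) ** 2 for r, c in zip(reference, candidate))
    ref_sq = sum(r ** 2 for r in reference)
    if ref_sq == 0.0:
        raise ValueError("reference trace is identically zero")
    return math.sqrt(diff_sq / ref_sq)


def passes_sim_to_sim(reference: list[float], candidate: list[float],
                      threshold: float = 0.08) -> bool:
    """Gate a policy on torque drift between two simulators."""
    return relative_rms_drift(reference, candidate) < threshold


# Synthetic joint-torque traces standing in for logs from two simulators.
steps = range(500)
torque_a = [20.0 * math.sin(0.05 * t) for t in steps]
torque_b = [1.05 * tau for tau in torque_a]                   # 5% amplitude mismatch
torque_c = [20.0 * math.sin(0.05 * t + 0.5) for t in steps]   # phase-shifted gait

print(passes_sim_to_sim(torque_a, torque_b))
print(passes_sim_to_sim(torque_a, torque_c))
```

A pure amplitude mismatch of 5% passes the gate, while a phase-shifted gait fails it by a wide margin; in practice the gate would run per joint over recorded rollouts, not over synthetic sinusoids.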

15 minutes to a deployable policy. Seo and colleagues' 2025 follow-up work [8] reports training a humanoid sim-to-real walking policy in approximately 15 minutes on a single A100 using MuJoCo Playground and a deliberately minimal PPO recipe (standard DR, no teacher-student, minimal reward shaping). The paper's purpose is less a throughput brag than a simplicity demonstration: the GPU-parallel-simulation catalyst has matured to the point where production-quality humanoid locomotion can be trained by a research lab in less time than it takes to fine-tune a large language model. The 15-minute claim is a benchmark for the post-2025 baseline; any new RL recipe that requires overnight training on 2025 hardware is, by construction, not competitive with the stack the field has settled on.

HumanoidBench as a shared evaluation target. Sferrazza et al.'s 2024 RSS paper [5] introduced HumanoidBench: the first open humanoid benchmark, built on MuJoCo, with a Unitree H1 equipped with two Shadow Hands and a combined 12 locomotion and 15 whole-body manipulation tasks. The benchmark's observation dimension is 151 and action dimension 61; reference results are reported for PPO, SAC, DreamerV3, and TD-MPC2. HumanoidBench's principal finding, relevant to this chapter, is the asymmetry between locomotion and whole-body manipulation: flat RL solves locomotion reasonably but reaches under 50% success on whole-body manipulation tasks, while hierarchical policies atop walking primitives reach 80%+. Chapter 7's discussion of the sim-to-real verdict and Chapter 15's differentiation-axis argument both reference this asymmetry; HumanoidBench is the evaluation scaffold that makes the asymmetry statistically legible.

5.5 The contact-fidelity frontier and differentiable simulation

The catalyst's success for locomotion is uncontested. Its limits for manipulation and for deformable-contact regimes are the frontier.

Rigid-body contact for manipulation. PhysX and MuJoCo both resolve contact with a constraint solver run at every time step (PhysX with iterative Gauss-Seidel-type solvers, MuJoCo with a convex soft-contact formulation that relaxes the classical linear complementarity problem); the simulation's fidelity depends on contact geometry, contact stiffness, friction-cone discretization, and time-step size. For locomotion, where contacts are largely planar (foot on ground) and durations are millisecond-scale, current simulators are accurate to within the margin that DR closes. For manipulation (fingertip on object, small contact area, variable surface material), the contact model's errors compound. HumanoidBench's whole-body manipulation results [5] show that contact-fidelity limitations are the primary reason flat RL underperforms on manipulation, not the RL algorithm itself.

Deformable, fluid, and soft-body regimes. Many real-world manufacturing tasks (cable routing, fabric handling, liquid pouring, foam dispensing) involve non-rigid physics that neither PhysX nor MuJoCo natively handles. Genesis [11] is the 2024 bet that a unified rigid/soft/fluid solver can close this gap; the claim is promising but not yet production-validated. Specialized tools — NVIDIA FleX, Taichi, DeepMind's hybrid DM_Soft — cover subsets of this space with varying fidelity.

Differentiable simulation. A distinct research thread asks: if the simulator is differentiable, can we replace RL's sample-intensive gradient estimation with direct analytical gradients? Schwarke, Klemm, and Tordesillas's 2024 CoRL paper [9] demonstrates the approach on quadruped locomotion, reporting 10–100× fewer simulation steps than PPO baselines at comparable final policy quality. This is a substantial efficiency gain, not a revolution. The current barrier is that differentiable contact is hard — contact discontinuities make gradients unstable — and most large-scale humanoid RL still uses non-differentiable stacks. Differentiable simulation is the strongest candidate for what Chapter 3 referred to as a fifth catalyst that may emerge in the 2026–2030 window. Its success would further compress the training-time budget and perhaps open tasks that sample-based methods currently cannot reach.
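The contrast between analytical and sampled gradients can be illustrated on a deliberately trivial system. The sketch below is not the method of [9]: it is a contact-free point mass under a constant force, with hypothetical function names and constants, where the exact derivative of the final position is propagated through every integration step. A zeroth-order estimator is included for comparison; it needs many rollouts to approximate what the differentiable rollout returns in one.

```python
import random

DT, STEPS, TARGET = 0.01, 100, 1.0   # hypothetical toy constants


def rollout(theta: float) -> tuple[float, float]:
    """Simulate a unit point mass under constant force `theta`.

    Returns the final position and its exact derivative with respect to
    theta, propagated through each integration step (forward mode).
    """
    x = v = 0.0
    dx = dv = 0.0   # d(x)/d(theta) and d(v)/d(theta)
    for _ in range(STEPS):
        v, dv = v + theta * DT, dv + DT
        x, dx = x + v * DT, dx + dv * DT
    return x, dx


def loss_and_grad(theta: float) -> tuple[float, float]:
    """Squared distance to the target and its analytical gradient."""
    x, dx = rollout(theta)
    err = x - TARGET
    return err * err, 2.0 * err * dx


def sampled_grad(theta: float, num_pairs: int, sigma: float,
                 rng: random.Random) -> float:
    """Zeroth-order estimate from antithetic perturbations (2*num_pairs rollouts)."""
    total = 0.0
    for _ in range(num_pairs):
        eps = rng.gauss(0.0, sigma)
        l_plus = loss_and_grad(theta + eps)[0]
        l_minus = loss_and_grad(theta - eps)[0]
        total += (l_plus - l_minus) * eps / (2.0 * sigma * sigma)
    return total / num_pairs


exact = loss_and_grad(0.0)[1]                                # one rollout
estimate = sampled_grad(0.0, 2000, 0.1, random.Random(0))    # 4000 rollouts
print(f"analytical: {exact:.4f}, sampled: {estimate:.4f}")

# Plain gradient descent on the analytical gradient.
theta = 0.0
for _ in range(50):
    theta -= 1.0 * loss_and_grad(theta)[1]
print(f"optimized force: {theta:.4f}")
```

The dynamics here are smooth, so the propagated derivative is well-behaved. Contact introduces discontinuities into exactly this chain of per-step derivatives, which is the instability the paragraph above identifies as the current barrier.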

5.6 Verdict and open questions

Catalyst 2 verdict: solved (standardized). Isaac Gym [2], the legged_gym recipe [3], Isaac Lab / Orbit [1], MuJoCo MJX and Playground [7], Humanoid-Gym [4], Booster Gym [6], and Genesis [11] collectively reduce training from days to minutes at million-environment-steps-per-second throughput. A single-GPU sim-to-real walking policy trains in 15 minutes [8]. The throughput problem is solved; the standardization has stabilized into roughly six production stacks with well-understood tradeoffs.

What remains open is contact fidelity for manipulation and deformable regimes. HumanoidBench's demonstrated asymmetry between locomotion and whole-body manipulation [5] anchors this claim quantitatively. Three specific open directions:

- Rigid contact fidelity for fingertip manipulation. Contact-patch geometry, normal-tangential coupling, and material-dependent friction models are at the resolution limit of current GPU-accelerated simulators. Research on fine-grained contact models is ongoing; no production solution exists.

- Non-rigid physics. Cable, fabric, soft tissue, fluid, and foam are not adequately simulated by the mainstream stacks. Genesis's unified-solver bet is the most ambitious candidate; specialized tools fill the gap in research settings.

- Differentiable simulation for contact-rich regimes. The 10–100× sample-efficiency gain that Schwarke et al. [9] report on quadruped locomotion indicates the research direction; generalizing to contact-rich manipulation is the unsolved step.

Chapter 7 develops the sim-to-real correction strategies that handle the residual gaps the current simulators cannot close; Chapter 15 argues that contact-fidelity for Korean manufacturing tasks (semiconductor wafer handling, battery cell stacking, cable routing in automotive assembly) is precisely the cluster of problems that GPU-parallel simulation has not yet commoditized.

5.7 Bridge to Chapter 6

GPU parallel simulation is the sample engine. The question Chapter 6 takes up is: what are we training on these engines? The answer is the teacher-student recipe with history encoders — the specific RL algorithmic canon that turned GPU-parallel-simulation throughput into deployable humanoid locomotion. Hwangbo 2019, Lee 2020, Kumar 2021, Siekmann 2021, and Radosavovic 2024 form the five-paper stack that Chapter 6 audits in detail.

References

[1] Mittal, M., et al. (2023). Orbit: A unified simulation framework for interactive robot learning environments. IEEE RA-L. doi:10.1109/LRA.2023.3270034. arXiv:2301.04195.

[2] Makoviychuk, V., et al. (2021). Isaac Gym: High performance GPU-based physics simulation for robot learning. NeurIPS Datasets and Benchmarks. arXiv:2108.10470.

[3] Rudin, N., Hoeller, D., Reist, P., & Hutter, M. (2021). Learning to walk in minutes using massively parallel deep reinforcement learning. Proc. CoRL. arXiv:2109.11978.

[4] Gu, X., Zhang, Y., Wu, K., et al. (2024). Humanoid-Gym: Reinforcement learning for humanoid robot with zero-shot sim2real transfer. arXiv:2404.05695.

[5] Sferrazza, C., Huang, D.-M., Lin, X., Lee, Y., & Abbeel, P. (2024). HumanoidBench: Simulated humanoid benchmark for whole-body locomotion and manipulation. Proc. RSS. arXiv:2403.10506.

[6] Wang, Y., et al. (2025). Booster Gym: An end-to-end reinforcement learning framework for humanoid robot locomotion. arXiv:2506.15132.

[7] Zakka, K., et al. (2025). MuJoCo Playground: An open-source framework for GPU-accelerated robot learning and sim-to-real. arXiv:2502.08844.

[8] Seo, S., et al. (2025). Learning sim-to-real humanoid locomotion in 15 minutes. arXiv:2512.01996.

[9] Schwarke, C., Klemm, V., & Tordesillas, J. (2024). Learning quadrupedal locomotion via differentiable simulation. Proc. CoRL. arXiv:2403.14864.

[10] AgiBot. (2026). Genie Sim 3.0: A high-fidelity comprehensive simulation platform for humanoid robot. arXiv:2601.02078.

[11] Genesis Team. (2024). Genesis: A generative and universal physics engine for robotics and beyond. Open-source release, December 2024.