Chapter 7: Sim-to-Real: Three Strategies

7.1 What sim-to-real actually asks

A policy trained against a simulator encodes the simulator's dynamics into its weights. When that policy is deployed on hardware, the hardware is another dynamical system, and the policy's behavior depends on how closely the two match. The sim-to-real gap is the composite error between those two systems, aggregated across every dimension the policy is sensitive to: dynamics (mass, friction, motor model, contact), observations (sensor noise, latency, calibration), and reward (the reward the policy optimized is not the one the deployment environment actually pays out).

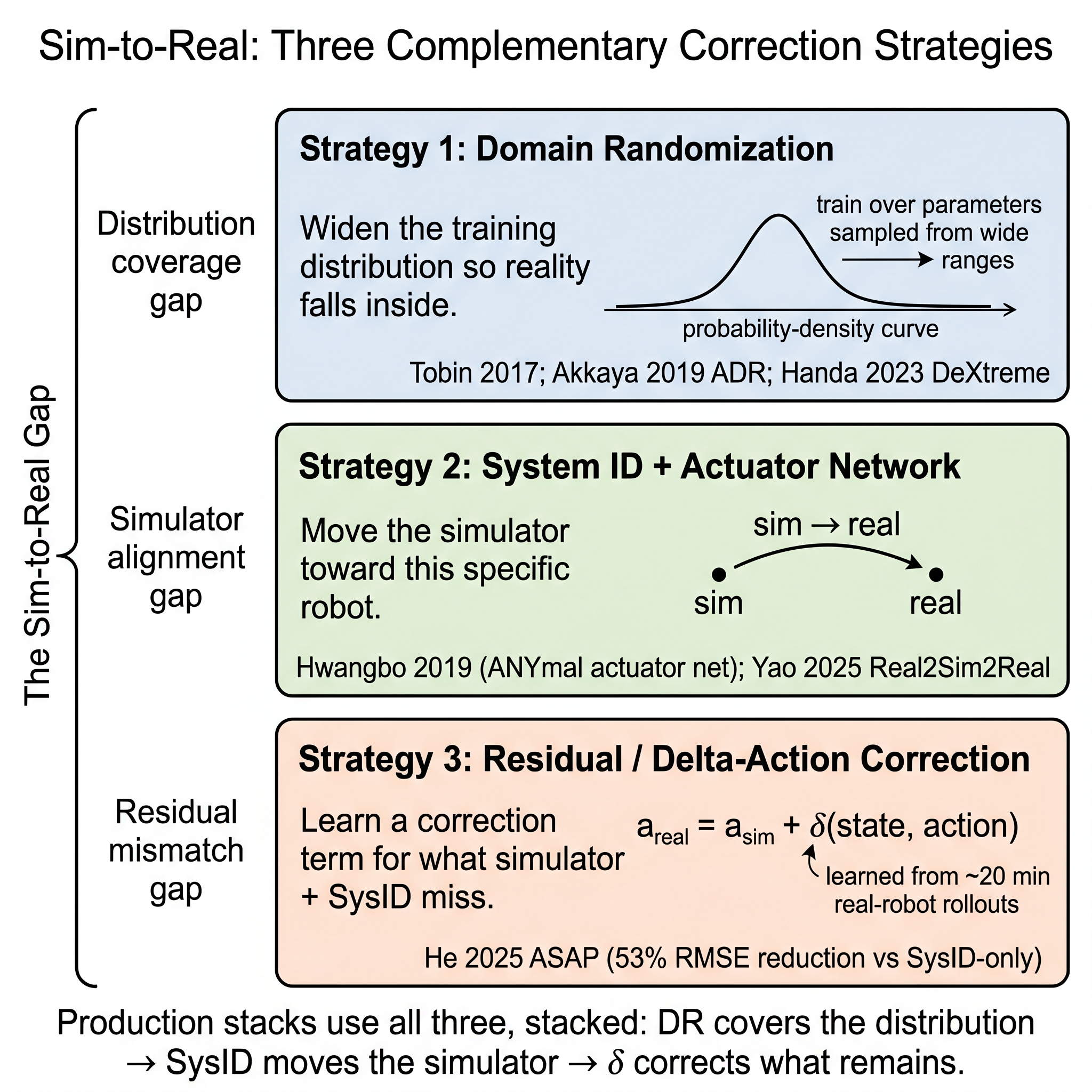

Chapters 4–6 described the ingredients that make sim-to-real possible: QDD hardware that executes torques honestly (Chapter 4), GPU simulation that can cover a distribution at scale (Chapter 5), and the teacher-student-plus-history-encoder recipe that lets a student adapt to environment uncertainty from proprioceptive history (Chapter 6). This chapter is about the correction layer: the specific strategies that absorb the residual gap those ingredients leave. Three strategies dominate 2026 practice:

- Domain randomization (DR) — train over a wide distribution of simulated environments, so the real robot is (with high probability) inside that distribution. Coverage as a substitute for correctness.

- System identification + actuator networks — measure the real robot's dynamics, then move the simulator toward reality.

- Residual / delta-action correction — keep the simulator as a first pass, and train a small real-data model that corrects what remains.

Each strategy is intellectually defensible on its own. In practice they are combined: frontier production humanoid stacks use all three at once, with different strategies handling different regions of the residual gap. The chapter proceeds through the three strategies (§§7.2–7.4), then covers the observation-space and deployment-engineering complements that the three-strategy taxonomy does not itself cover (§§7.5–7.6), and gives the Part II verdict (§7.7) before the bridge to Part III (§7.8). The verdict: sim-to-real correction is partially solved. Bounded-contact locomotion is closed; contact-rich manipulation remains the open frontier.

7.2 Strategy 1 — Domain randomization

Domain randomization (DR) is the oldest of the three strategies and the dominant default. The canonical formulation is Tobin et al.'s 2017 IROS paper [1]: train on a wide variance of synthetic textures, lighting, and object pose so that the real-world appearance becomes "just another variation" seen during training. Tobin's original deployment was object localization — detection transferring zero-shot with under 1.5 cm error on real objects, with no real data used in training [1]. The principle generalizes far beyond vision.

For humanoid locomotion, DR operates over a 10–30-parameter distribution of dynamics-relevant quantities: joint friction, link mass, center-of-mass offset, motor gain, terrain height, terrain friction, control latency, observation noise spectrum, external disturbance magnitude, and similar. The policy is trained to succeed across the full distribution, which implicitly forces it to learn a robustness-oriented representation of environment context. Chapter 6's teacher-student recipe is the complementary machinery — the teacher sees the ground-truth parameters, the student infers them from proprioceptive history [6] — but DR is what creates the distribution the student learns to infer from.
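A minimal sketch of what sampling from such a distribution can look like. The parameter names and ranges are hypothetical placeholders rather than values from any cited pipeline, and uniform sampling is an assumption; real pipelines choose a distribution per parameter.

```python
import random

# Hypothetical randomization ranges (illustrative values only).
DR_RANGES = {
    "joint_friction_scale": (0.5, 1.5),
    "link_mass_scale": (0.8, 1.2),
    "com_offset_m": (-0.03, 0.03),
    "motor_gain_scale": (0.9, 1.1),
    "terrain_friction": (0.3, 1.2),
    "control_latency_s": (0.0, 0.02),
}


def sample_environment(rng, ranges=DR_RANGES):
    """Draw one simulated environment: each parameter uniform within its range."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in ranges.items()}


def inside_distribution(params, ranges=DR_RANGES):
    """Check whether a (measured) parameter set lies within the randomized ranges."""
    return all(ranges[name][0] <= value <= ranges[name][1]
               for name, value in params.items())


# One parameter draw per parallel environment.
rng = random.Random(0)
envs = [sample_environment(rng) for _ in range(4096)]
```

`inside_distribution` makes the "coverage as a substitute for correctness" bet explicit: transfer is expected to work when the real robot's parameters, if they could be measured, would pass this check.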

Two developments advanced DR's maturity. The first is Automatic Domain Randomization (ADR) [4], from OpenAI's Rubik's-cube-with-a-robot-hand work, which replaces fixed randomization ranges with an automatic curriculum: the system widens a parameter's range when the policy performs well at the current boundary and narrows it when performance there falls too low. The process is a performance-threshold-driven expansion of the randomization space rather than a manual sweep. The paper's demonstration (a Shadow Hand solving a Rubik's Cube one-handed at up to 15 consecutive face rotations before physical failure dominates [4]) was the first published high-stakes sim-to-real dexterous manipulation result.
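The range-adaptation loop can be sketched as a simple threshold rule. This is a simplified illustration in the spirit of ADR, not the paper's implementation: the thresholds, the step size, and the `toy_success_rate` evaluator are all assumptions, and a single range is adapted where a real system would adapt many.

```python
def adr_step(width, success_at_boundary, step=5,
             expand_above=0.8, shrink_below=0.4, min_width=0, max_width=100):
    """One curriculum update for a single randomization range.

    width is the half-width of the range, in integer percent of nominal.
    """
    if success_at_boundary >= expand_above:
        return min(max_width, width + step)   # policy copes: widen the range
    if success_at_boundary <= shrink_below:
        return max(min_width, width - step)   # policy struggles: narrow it
    return width                              # in between: hold


def toy_success_rate(width):
    """Stand-in for policy evaluation: this toy policy copes up to 50%."""
    return 0.9 if width <= 50 else 0.3


width = 10
history = []
for _ in range(40):
    width = adr_step(width, toy_success_rate(width))
    history.append(width)
```

With a fixed toy policy the width climbs to the edge of what the policy tolerates and then hovers there; in training, the policy keeps improving, so the hover point moves outward over time.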

The second advance is the GPU-parallel-simulation scale that made DR statistically meaningful. NVIDIA's DeXtreme [8] demonstrates Allegro-hand sim-to-real cube reorientation on Isaac Gym with 3–4k parallel environments on a single GPU, achieving 73% zero-shot success. Before GPU-parallel simulation, DR's distribution was a research promise; at million-environment-step-per-second throughput (Chapter 5), DR became the production default. Humanoid-Gym [10], Booster Gym [13], and the MuJoCo Playground fast-walking pipeline [20] all inherit this maturity.

DR's limitation is structural: it covers only the distribution the simulator can sample. Sources of variation that lack a compact parameterization (object geometry, grasp topology, contact-patch micro-structure, arbitrary material viscoelasticity) cannot be drawn from a simple prior distribution. DR is highly effective for locomotion, where the dynamics-relevant parameters are low-dimensional and well understood. It is limited for contact-rich manipulation, where the dimensions that matter are not captured by a handful of scalar ranges. This limitation is what Strategy 3 addresses.

7.3 Strategy 2 — System identification and actuator networks

The second strategy attacks the problem from the opposite direction: rather than widen the simulator's distribution, move the simulator toward reality. Traditional system identification — measure motor parameters, inertial properties, and frictional coefficients on real hardware, then plug them into first-principles simulator models — is labor-intensive and routinely imperfect, but it remains the first-line approach for high-value parameters.

The 2019 contribution that pushed the strategy forward was Hwangbo et al.'s actuator network [3] (Chapter 6 §6.2). Rather than analytically estimate per-motor stiffness, damping, backlash, and thermally varying friction, Hwangbo and colleagues trained a small MLP on recorded (joint state, commanded torque, actual torque) triples. The MLP is the actuator model the simulator uses; it absorbs whatever non-idealities the first-principles model cannot capture. The learned policy, trained against the actuator-network-augmented simulator, achieves 1.6 m/s on ANYmal with 25% cost-of-transport reduction and recovery from 80 of 100 fall poses [3].
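To make the data flow concrete, the sketch below fits an actuator model from recorded triples. It is deliberately not an MLP: a two-coefficient linear model stands in for the network so the example stays dependency-free, and the "recorded" data is synthetic. The function names and the coefficients 0.9 and -0.2 are hypothetical.

```python
import random


def fit_actuator_model(samples):
    """Least-squares fit of actual = a * commanded + b * velocity.

    samples: iterable of (joint_velocity, commanded_torque, actual_torque).
    """
    s_cc = s_cv = s_vv = t_c = t_v = 0.0
    for velocity, commanded, actual in samples:
        s_cc += commanded * commanded
        s_cv += commanded * velocity
        s_vv += velocity * velocity
        t_c += commanded * actual
        t_v += velocity * actual
    # Solve the 2x2 normal equations in closed form.
    det = s_cc * s_vv - s_cv * s_cv
    a = (t_c * s_vv - t_v * s_cv) / det
    b = (s_cc * t_v - s_cv * t_c) / det
    return a, b


# Synthetic stand-in for hardware logs: 10% torque loss plus viscous drag.
rng = random.Random(0)
logs = []
for _ in range(500):
    velocity = rng.uniform(-5.0, 5.0)
    commanded = rng.uniform(-10.0, 10.0)
    logs.append((velocity, commanded, 0.9 * commanded - 0.2 * velocity))

gain, drag = fit_actuator_model(logs)


def simulated_torque(commanded, velocity):
    """What the simulator applies in place of the ideal commanded torque."""
    return gain * commanded + drag * velocity
```

The simulator then calls `simulated_torque` wherever it previously applied the commanded torque directly; swapping the linear fit for a small network changes the model class but not this interface.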

The actuator-network approach works particularly well for QDD actuators (Chapter 4) because motor-level dynamics are the residual gap between first-principles models and reality, and because QDD backdrivability and motor-current-based torque estimation make the recorded data clean and rich. Subsequent work, including Booster Gym [13] and Humanoid-Gym [10], inherits and refines the actuator-network pattern.

A 2025 evolution of the strategy is Real2Sim2Real self-supervised system identification [16]. The pipeline collects short real-robot rollouts, updates sim parameters via gradient descent on observed state-action-state transitions, then fine-tunes the policy against the aligned simulator. The contribution is not a new representational idea but an autonomous loop: the simulator aligns itself without manual parameter sweeping, which makes the Strategy-2 approach viable at higher-dimensional parameter spaces where manual sys-ID does not scale. The paper reports performance close to ASAP (Strategy 3) with substantially reduced manual tuning.
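The alignment step can be illustrated on a toy system. The sketch below identifies a single damping coefficient of a one-dimensional point mass by gradient descent on one-step prediction error. The system, the constants, and the synthetic "real" transitions are all assumptions for illustration; the cited pipeline operates on a full humanoid simulator.

```python
import random

DT = 0.1    # integration step in seconds (assumed)
MASS = 1.0  # treated as known in this sketch


def sim_step(velocity, force, damping):
    """One explicit-Euler step of a damped point mass."""
    return velocity + DT * (force - damping * velocity) / MASS


def identify_damping(transitions, damping=0.2, lr=20.0, iters=200):
    """Gradient descent on the mean squared one-step prediction error."""
    n = len(transitions)
    for _ in range(iters):
        grad = 0.0
        for v, u, v_next in transitions:
            error = sim_step(v, u, damping) - v_next
            # d(sim_step)/d(damping) = -DT * v / MASS
            grad += 2.0 * error * (-DT * v / MASS) / n
        damping -= lr * grad
    return damping


# Stand-in for real rollouts: generated from a "true" damping of 0.7.
rng = random.Random(0)
real_transitions = []
for _ in range(200):
    v, u = rng.uniform(-2.0, 2.0), rng.uniform(-1.0, 1.0)
    real_transitions.append((v, u, sim_step(v, u, 0.7)))

estimated_damping = identify_damping(real_transitions)
```

The learning rate looks large because the gradient scales with `DT ** 2`. In higher-dimensional parameter spaces the same loop applies, with the analytic derivative replaced by automatic differentiation or finite differences.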

Strategy 2's limit is that it moves the simulator toward a particular robot at a particular time. When the robot is deployed in a new environment or when the hardware ages, the simulator-reality alignment drifts. Strategy 2 is an engineering practice that requires ongoing maintenance rather than a one-time fix. For industrial deployment (Chapter 15), this maintenance cost is non-trivial — it is one of the reasons Korean manufacturing's data-collection strength is a strategic asset.

7.4 Strategy 3 — Residual and delta-action correction

The third strategy keeps the simulator as a first pass but explicitly models the residual between simulator and reality, and applies that residual correction at runtime. Tairan He and colleagues' ASAP (Aligning Simulation and Real-World Physics) [14] is the canonical 2025 reference. ASAP is a two-stage real-to-sim-to-real framework:

- Stage 1: pretrain a motion-tracking policy in simulation on retargeted human mocap.

- Stage 2: collect approximately 20 minutes of real-robot rollouts on the pretrained policy; train a delta-action residual model that maps (sim state, sim action) → real-world correction; fine-tune the policy in simulation augmented with the delta-action model.

The residual model is small — it does not have to model the full physics, only the difference between the simulator's prediction and reality's observation. ASAP demonstrates substantial gains on agile humanoid skills: joint-tracking RMSE reduced by 53% vs system-identification-only baselines and 38% vs DR-only baselines on agile sequences [14]. The demonstration includes a G1 Kung-fu kick and a spin — skills that neither SysID alone nor DR alone produced at comparable quality.
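A toy version of Stage 2 shows why the residual can be small. In this sketch the simulator assumes an ideal actuator while the stand-in "hardware" has a weaker gain and a constant bias. The delta-action model is a linear function of the action only, which is a simplification: the model described above also conditions on state. All names and constants here are hypothetical.

```python
import random

DT = 0.02


def sim_step(state, action):
    """Simulator: idealized actuator, the state integrates the action."""
    return state + DT * action


def real_step(state, action):
    """Stand-in for hardware: weaker motor gain plus a constant bias."""
    return state + DT * (0.8 * action - 0.1)


def fit_delta_action(rollouts):
    """Fit delta(a) = w * a + b so sim_step(s, a + delta) matches the real s'."""
    actions = [a for _, a, _ in rollouts]
    # Action correction that would have made the simulator reproduce each transition.
    targets = [(s_next - s) / DT - a for s, a, s_next in rollouts]
    mean_a = sum(actions) / len(actions)
    mean_t = sum(targets) / len(targets)
    cov = sum((a - mean_a) * (t - mean_t) for a, t in zip(actions, targets))
    var = sum((a - mean_a) ** 2 for a in actions)
    w = cov / var
    return w, mean_t - w * mean_a


rng = random.Random(0)
rollouts = []
for _ in range(300):
    s, a = rng.uniform(-1.0, 1.0), rng.uniform(-2.0, 2.0)
    rollouts.append((s, a, real_step(s, a)))

w, b = fit_delta_action(rollouts)


def corrected_sim_step(state, action):
    """Simulator augmented with the learned delta action."""
    return sim_step(state, action + w * action + b)
```

The policy is then fine-tuned against `corrected_sim_step` instead of `sim_step`. The fitted model has two parameters because it only has to describe the mismatch, not the dynamics.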

The conceptual claim ASAP cashes out is that the residual is the right object to model. First-principles simulation handles the easy part of the dynamics. DR covers the "dimension of uncertainty" part. Delta-action correction captures what neither can: the idiosyncratic mismatch between a specific robot's dynamics and any generic simulator. The three strategies are complementary because they address different layers of the sim-to-real gap: DR covers the distribution, SysID moves the simulator, delta-action corrects the residual.

Yao and colleagues' Real2Sim2Real follow-up [16] reframes ASAP's delta-action correction as a special case of a self-supervised sim-alignment loop. The paper argues that, given enough real-world data, Strategy 2 (SysID) and Strategy 3 (delta-action) converge: as the simulator becomes better aligned, the delta-action correction it needs shrinks toward zero, so the corrected action approaches the original one. This convergence is the 2026 research frontier's shared assumption; the practical question is how much real data is enough.

Figure (ch07_three_strategies.png): The three sim-to-real strategies. (1) Domain Randomization ([1]; [4] ADR; [8] DeXtreme); (2) System ID + Actuator Network moves the simulator toward the specific robot ([3]; [16] Real2Sim2Real); (3) Residual / Delta-Action correction learns a correction term for what simulator + SysID miss ([14] ASAP, 53% RMSE reduction vs SysID-only on agile humanoid skills). Production stacks use all three, stacked. Illustration by author (Gemini-assisted reconstruction).


7.5 Observation-space sim-to-real

The three-strategy taxonomy organizes dynamics sim-to-real. Observation-space sim-to-real — vision, depth, tactile, IMU — is a parallel problem with its own strategies. For humanoid locomotion through 2024, most work used proprioception-only policies, which sidesteps the observation-space gap entirely (Chapter 6's canon is proprioceptive). As the field moves to vision-conditioned locomotion (Humanoid Parkour [19]) and vision-language-action models (Chapter 10), observation-space sim-to-real becomes the frontier.

Three approaches are in active use. Render-randomization is the DR-style approach: randomize textures, lighting, materials, and camera intrinsics in simulation so the real-world rendering is within the training distribution. Tobin et al. 2017 [1] is the foundational paper; modern pipelines use physics-based rendering (Isaac Lab's RTX integration, MuJoCo Playground's render pipeline) at training-scale throughput.

Depth-only policies are the restriction approach. Depth images are much more stable across domain shift than RGB: distance measurements do not depend on color or texture and vary far less with lighting than pixel intensities do, although real depth sensors still degrade in rain and on reflective surfaces. Humanoid Parkour [19] uses depth vision for teacher-student distillation specifically to keep the observation-space sim-to-real gap manageable. The price is information loss: depth alone cannot see color or texture.

World-model / denoising approaches train a learned dynamics model alongside the policy. Gu et al.'s Denoising World Model Learning (DWML) [12] combines a learned dynamics model with a denoising objective to improve humanoid locomotion robustness on challenging terrains (RobotEra XBot walking on slippery surfaces and stair-like terrain, +25% traversal success over model-free PPO baselines). DWML's framing — train a world model that is robust to observation noise, then plan or learn a policy within it — is the emerging 2025 research direction that complements the three-strategy taxonomy at the observation-space side.

7.6 Deployment engineering — latency, safety filters, fallback controllers

Sim-to-real's practical success depends on a third axis the taxonomy does not name: deployment engineering. A sim-to-real-capable policy that cannot meet real-time constraints, cannot arbitrate with a safety layer, and cannot fall back when inference fails is not deployable. Three patterns define 2026 practice.

Latency budgets. The System 0/1/2 architecture (Chapter 9) commits to specific frequency tiers: 1 kHz System 0, 100–200 Hz System 1, 7–10 Hz System 2. Each tier's inference must fit its budget, including observation preprocessing, network forward pass, post-processing, and command dispatch. Transformer history encoders (Chapter 6) stress the System 1 budget because their inference cost scales with context length. Radosavovic et al.'s 2024 finding that a longer attention context improves robustness [11] runs into this wall; engineering the longest affordable context is an explicit optimization in frontier stacks.
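The arithmetic behind these budgets is short enough to write down. The sketch below converts tier rates into per-cycle budgets and computes the longest history that fits. It assumes inference cost is linear in context length, and the overhead and per-token timings are hypothetical; measured profiles should replace them.

```python
def budget_us(rate_hz):
    """Time available per control cycle, in whole microseconds."""
    return 1_000_000 // rate_hz


def max_context_length(rate_hz, fixed_overhead_us, per_token_us):
    """Longest history that fits one cycle, assuming cost linear in context length.

    fixed_overhead_us covers preprocessing, post-processing and command dispatch.
    """
    remaining = budget_us(rate_hz) - fixed_overhead_us
    return max(0, remaining // per_token_us)


# Tier rates from the text; System 1 is shown at both ends of its range.
TIER_RATES_HZ = {
    "System 0": 1000,
    "System 1 (200 Hz)": 200,
    "System 1 (100 Hz)": 100,
    "System 2 (10 Hz)": 10,
}
budgets = {tier: budget_us(hz) for tier, hz in TIER_RATES_HZ.items()}

# Hypothetical profile: 1.5 ms fixed overhead, 50 us per history step.
context_at_200hz = max_context_length(200, 1500, 50)
context_at_100hz = max_context_length(100, 1500, 50)
```

Under these assumed timings, halving the System 1 rate from 200 Hz to 100 Hz raises the affordable context from 70 to 170 steps, which is the trade-off the paragraph above describes.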

Safety filters. The QP-based safety filter pattern (Chapter 2 §2.3, Chapter 4) operates beneath the learned policy. Agility Robotics's Motor Cortex, described as an "always-on safety layer" [18], is the commercial instance. He et al.'s Agile But Safe (ABS) [9] provides the corresponding research pattern: a dual-policy framework in which a fast "agile" policy handles high-speed running, a conservative recovery policy takes over under safety risk, and a learned reach-avoid value function gates between them. ABS's reported result (3.1 m/s on Unitree Go1 with 0 collisions across 100 runs) validates the dual-policy pattern; the general pattern (one policy for capability, one for safety, a learned arbiter) recurs across frontier production stacks.
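The gating logic of the dual-policy pattern reduces to a few lines. This is a schematic sketch, not ABS's implementation: the sign convention (values above the threshold mean risk), the threshold, and the three toy callables are assumptions.

```python
def select_policy(obs, agile_policy, recovery_policy, reach_avoid_value,
                  threshold=0.0):
    """Gate between two policies using a reach-avoid value estimate.

    Assumed convention: a value above the threshold signals safety risk.
    """
    if reach_avoid_value(obs) > threshold:
        return "recovery", recovery_policy(obs)
    return "agile", agile_policy(obs)


# Toy stand-ins; obs is the distance to the nearest obstacle in metres.
def toy_agile(obs):
    return 3.0          # commanded speed in m/s


def toy_recovery(obs):
    return 0.0          # brake


def toy_value(obs):
    return 1.0 - obs    # positive (risky) when closer than 1 m


far = select_policy(2.5, toy_agile, toy_recovery, toy_value)
near = select_policy(0.4, toy_agile, toy_recovery, toy_value)
```

Keeping the arbiter outside both policies means either policy can be retrained without touching the gate, as long as the value function is re-validated against the new pair.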

Fallback controllers. When learned inference fails or detects out-of-distribution observations, a classical controller assumes command. For locomotion, the fallback is typically a whole-body QP with a conservative balance target (Chapter 2). For whole-body motion, the fallback is typically a slower but statically stable trajectory. The pattern is explicit in Boston Dynamics's hybrid MPC+RL architecture (Chapter 11): the MPC is not the primary controller in post-2024 Atlas work, but it is always available as the fallback that the RL arbitrates against. The fallback's presence is the reason industrial deployment permits learned policies at all: if the learned policy can fail gracefully into a controller with provable guarantees, the safety case is tractable.
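A minimal sketch of the hand-over logic, under stated assumptions: out-of-distribution detection is reduced to a per-dimension z-score against training statistics, and "inference fails" is modelled as a raised exception. Both are simple stand-ins chosen for illustration, and every name here is hypothetical.

```python
def ood_score(obs, train_mean, train_std):
    """Largest per-dimension z-score of the observation."""
    return max(abs(o - m) / s for o, m, s in zip(obs, train_mean, train_std))


def act_with_fallback(obs, learned_policy, fallback_controller,
                      train_mean, train_std, max_z=4.0):
    """Use the learned policy unless the input looks unfamiliar or inference fails."""
    if ood_score(obs, train_mean, train_std) > max_z:
        return "fallback:ood", fallback_controller(obs)
    try:
        return "learned", learned_policy(obs)
    except Exception:
        return "fallback:error", fallback_controller(obs)


# Toy stand-ins for the two controllers.
def toy_learned(obs):
    return [0.5 * x for x in obs]


def toy_fallback(obs):
    return [0.0 for _ in obs]   # conservative: command zero


def broken_policy(obs):
    raise RuntimeError("inference backend unavailable")


MEAN, STD = [0.0, 0.0], [1.0, 1.0]
normal = act_with_fallback([0.2, -0.4], toy_learned, toy_fallback, MEAN, STD)
unfamiliar = act_with_fallback([9.0, 0.0], toy_learned, toy_fallback, MEAN, STD)
failed = act_with_fallback([0.2, -0.4], broken_policy, toy_fallback, MEAN, STD)
```

The returned tag records why control changed hands, which is the information a safety case needs when it argues that every failure mode ends in the classical controller.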

7.7 Verdict and open questions

Catalyst 4 verdict: partially solved. The three strategies co-exist and stack. For locomotion, the problem is closed: domain randomization at GPU-parallel scale produces policies robust across the simulable distribution; system identification with actuator networks aligns the simulator for each embodiment; delta-action residual correction (ASAP) handles the last few percent. Seo et al.'s 15-minute sim-to-real walking result [15] illustrates the closure — for locomotion, sim-to-real is now a 15-minute engineering task, not a research problem.

For contact-rich manipulation, the problem is unsolved:

- No delta-action equivalent has been demonstrated for bimanual object manipulation. ASAP's residual correction works because locomotion's contact is primarily foot-ground, which is well-covered by simulator contact models plus DR. Manipulation's contact is fingertip-to-object at small contact patches with material-dependent friction, and the residual structure is qualitatively different.

- OmniRetarget-style interaction-mesh approaches [17] work when object meshes are available. In factory manipulation, mesh availability is a manufacturing-specific asset (Korea's automotive and semiconductor sectors have CAD for most parts); in home manipulation, it is not.

- Cross-embodiment sim-to-real — a policy trained for Unitree G1 deployed on Figure 03 — remains an open problem. Figure's "visual proprioception model" for end-effector 6D self-calibration (Chapter 12) is the closest public attempt; no generalized cross-embodiment sim-to-real pattern is commoditized.

Each of the three open frontiers above maps to one or more of the differentiation axes Chapter 15 develops. Chapter 15's argument that Korean manufacturing is unusually well-positioned for the manipulation-sim-to-real problem is grounded in the specific coincidence: Korea has the mesh assets (CAD archives), the deployment environments (bounded-variance factories), and the capex justification (declining labor supply) that let a factory-first manipulation-sim-to-real program compound in ways a home-first program cannot.

7.8 Bridge to Part III

Parts I and II are now complete. Part I audited the orthodox stack (Ch01), its survivors into the learning era (Ch02), and the paradigm-shift map (Ch03). Part II traced the four catalysts: QDD hardware (Ch04), GPU-parallel simulation (Ch05), the teacher-student learning canon (Ch06), and sim-to-real correction (Ch07). Each catalyst closes with a verdict; the aggregate verdict is the Chapter 3 §3.4 table, which Chapter 15 will re-open in service of the differentiation-axis argument.

Part III takes up the stack the catalysts enable. Chapter 8 provides the modern theory primer (RL, Transformers, diffusion policy, VLA concepts) that a reader needs before the architectural synthesis of Chapter 9 and the VLA treatment in Chapter 10. Readers whose classical-controls background was covered in Chapter 2 will use Chapter 8 as its paired companion; readers who arrived with modern RL background will use Chapter 2 as theirs.

References

- [1] Tobin, J., Fong, R., Ray, A., Schneider, J., Zaremba, W., & Abbeel, P. (2017). Domain randomization for transferring deep neural networks from simulation to the real world. Proc. IROS. arXiv:1703.06907.

- [2] OpenAI et al. (2019). Learning dexterous in-hand manipulation. International Journal of Robotics Research. arXiv:1808.00177.

- [3] Hwangbo, J., Lee, J., Dosovitskiy, A., Bellicoso, D., Tsounis, V., Koltun, V., & Hutter, M. (2019). Learning agile and dynamic motor skills for legged robots. Science Robotics. arXiv:1901.08652.

- [4] Akkaya, I., Andrychowicz, M., et al. (2019). Solving Rubik's cube with a robot hand. arXiv:1910.07113.

- [5] Lee, J., Hwangbo, J., Wellhausen, L., Koltun, V., & Hutter, M. (2020). Learning quadrupedal locomotion over challenging terrain. Science Robotics. arXiv:2010.11251.

- [6] Kumar, A., Fu, Z., Pathak, D., & Malik, J. (2021). RMA: Rapid motor adaptation for legged robots. Proc. RSS. arXiv:2107.04034.

- [7] Dao, J., Duan, H., Apgar, T., & Hurst, J. (2022). Sim-to-real learning of all common bipedal gaits via periodic reward composition. Proc. IEEE ICRA. arXiv:2011.01387.

- [8] Handa, A., et al. (2023). DeXtreme: Transfer of agile in-hand manipulation from simulation to reality. Proc. IEEE ICRA. arXiv:2210.13702.

- [9] He, T., et al. (2024). Agile but safe (ABS): Learning collision-free high-speed legged locomotion. Proc. RSS. arXiv:2401.17583.

- [10] Gu, X., Zhang, Y., Wu, K., et al. (2024). Humanoid-Gym: Reinforcement learning for humanoid robot with zero-shot sim2real transfer. arXiv:2404.05695.

- [11] Radosavovic, I., et al. (2024). Real-world humanoid locomotion with reinforcement learning. Science Robotics. arXiv:2303.03381.

- [12] Gu, X., et al. (2025). Advancing humanoid locomotion: Mastering challenging terrains with denoising world model learning. arXiv:2408.14472.

- [13] Wang, Y., et al. (2025). Booster Gym: An end-to-end reinforcement learning framework for humanoid robot locomotion. arXiv:2506.15132.

- [14] He, T., et al. (2025). ASAP: Aligning simulation and real-world physics for learning agile humanoid whole-body skills. Proc. RSS. arXiv:2502.01143.

- [15] Seo, S., et al. (2025). Learning sim-to-real humanoid locomotion in 15 minutes. arXiv:2512.01996.

- [16] Yao, X., et al. (2025). Real2Sim2Real: Self-supervised system identification for zero-shot humanoid deployment. arXiv:2506.12769.

- [17] Yang, H., et al. (2025). OmniRetarget: Interaction-preserving data generation for humanoid whole-body loco-manipulation and scene interaction. arXiv:2509.26633.

- [18] Agility Robotics. (2025). Motor Cortex: An always-on safety layer for Digit. https://agilityrobotics.com

- [19] Zhuang, Z., et al. (2024). Humanoid parkour learning. Proc. CoRL. arXiv:2406.10759.

- [20] Zakka, K., et al. (2025). MuJoCo Playground: An open-source framework for GPU-accelerated robot learning and sim-to-real transfer. arXiv:2502.08844.