Chapter 10: VLA and Loco-Manipulation Integration

10.1 What VLA asks of the humanoid stack

A Vision-Language-Action (VLA) model takes an image observation and a natural-language instruction and emits a sequence of robot actions. As a sentence, the claim is unambitious. As a system, it is the 2023–2026 frontier's load-bearing architectural bet: locomotion (Chapter 6) and the three-layer architecture (Chapter 9) are the precondition, and a single policy absorbing vision, language, and loco-manipulation is the integration thesis that makes the post-catalyst stack economically interesting.

Chapter 8 §8.7 provided the minimum VLA vocabulary. This chapter develops the category: what VLA systems exist, how they are architected, what cross-embodiment generalization looks like in practice, how each dominant company stack occupies the VLA design space, and what remains open. The chapter is intentionally the third-densest of the book (after Ch06 and Ch12); the VLA literature in 2024–2026 exploded, and the responsibility of a survey is to compress without losing the structural comparisons.

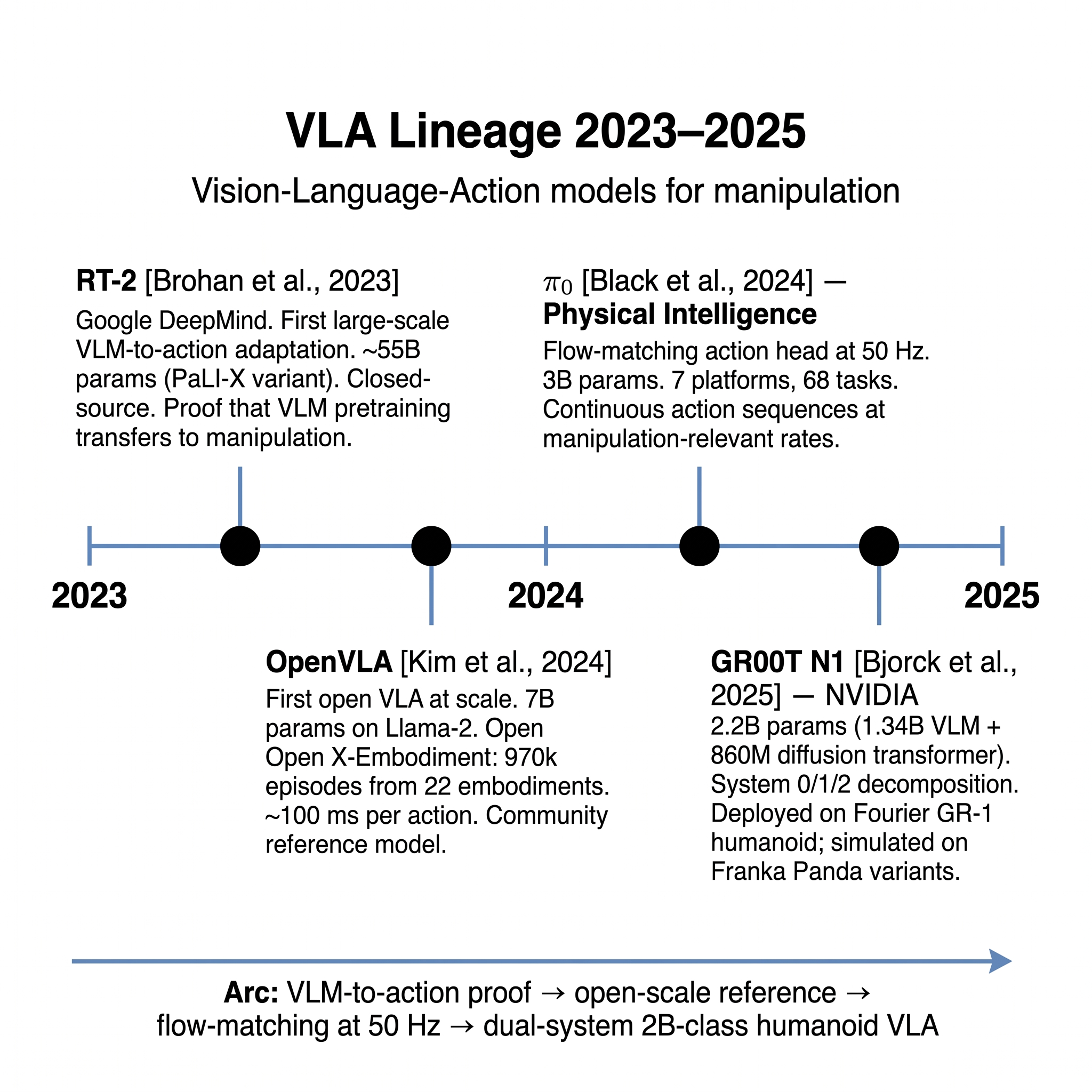

The chapter proceeds through six claims. First (§10.2), it traces the lineage from RT-2 in 2023 to the 2026 frontier, establishing the architectural genealogy. Second (§10.3), it examines four canonical VLAs from the open and semi-open lineage (the closed-source RT-2 plus OpenVLA, π0, and GR00T N1/N1.5) in comparative detail. Third (§10.4), it surveys the two largest closed or partially closed production VLAs, Figure Helix and AgiBot GO-1/GO-2, and notes where public disclosure allows comparison. Fourth (§10.5), it takes up the loco-manipulation integration question: how VLAs that grew from manipulation (RT-2 lineage) and from locomotion (Helix, TWIST) are converging. Fifth (§10.6), it examines cross-embodiment generalization as the key economic-validity question. Sixth (§10.7), it addresses action-decoder design (diffusion, flow matching, tokenized actions) and the FAST action-tokenization work that compressed inference cost at scale. The remaining sections are supporting material: §10.8 surveys the data-scale question, §10.9 covers Toyota Research Institute's Large Behavior Model, §10.10 discusses the research surveys that complement this chapter, §10.11 closes with the Part III verdict and open questions, and §10.12 bridges to Part IV.

10.2 The VLA lineage — RT-2 to 2026 frontier

The VLA category as currently understood begins with Google DeepMind's RT-2 [6]. RT-2's architectural move was to take a pretrained vision-language model (PaLI / PaLM-E family) and co-train it on web-scale VQA data plus a robotic-action corpus discretized into tokens that the same model could predict. The observation was striking: a VLM that had seen internet-scale image-text pairs, when fine-tuned on robot trajectories, generalized to novel objects and novel language instructions that pure robotic-action models could not. RT-2 established that vision-language pretraining transfers into manipulation without the robotic-action fine-tune destroying the pretrained knowledge.

RT-2 was closed-source and its training data was proprietary. OpenVLA [18] released the first fully open VLA at comparable scale. Built on Llama-2 with a vision encoder, OpenVLA has 7 billion parameters and is trained on the Open X-Embodiment (OXE) dataset [3], spanning approximately 970,000 episodes from 22 robot embodiments. The action head discretizes each continuous action dimension into tokens that the autoregressive decoder predicts; inference takes approximately 100 ms per action on a consumer GPU. OpenVLA was the first open VLA to demonstrate cross-embodiment generalization at scale.

π0 from Physical Intelligence [19] introduced the flow-matching action head to VLAs: rather than predict discrete action tokens, the action head is a flow-matching model (Chapter 8 §8.6) that generates continuous action sequences at 50 Hz. π0 trains on a mixed corpus of seven robot platforms and 68 tasks; the architectural contribution is showing that manipulation-relevant frequencies (50 Hz) are achievable from a VLA backbone without compromising the benefits of VLM pretraining. π0's successor π0.5 [22] extends the approach to open-world generalization, and π0-FAST [23] combines π0 with the FAST action-tokenization work (§10.7) to retain autoregressive efficiency.

NVIDIA GR00T N1 [20] and N1.5 [21] reframe the VLA as a dual-system architecture, explicitly mapping onto the System 0/1/2 decomposition (Chapter 9). N1 is 2.2 billion parameters total: a 1.34-billion-parameter VLM as System 2 plus an 860-million-parameter diffusion transformer as System 1. GR00T trains on a mixture of simulated rollouts, neural-network-generated trajectories, prior MPC rollouts, and real-robot teleoperation data, and is deployed in the real world on the Fourier GR-1 humanoid, with simulation-only evaluations extending to Franka Panda arm variants (single-arm and bimanual). GR00T N1.5 reports approximately 10% improvement over N1 at a similar parameter count, which points to architectural and data refinements rather than a scale step-up.

The RT-2 → OpenVLA → π0 → GR00T N1 sequence defines the open-and-semi-open VLA lineage. The closed-source production VLAs — Figure Helix and AgiBot GO-1/GO-2 — sit alongside this lineage and occupy the frontier in different ways (§10.4).

Figure: VLA lineage timeline. RT-2 [6] (Google DeepMind, ~55B params, VLM-to-action proof) → OpenVLA [18] (7B open-source reference, Open X-Embodiment, 970k episodes) → π0 [19] (Physical Intelligence, flow-matching at 50 Hz, 3B params) → GR00T N1 [20] (NVIDIA, 2.2B dual-system VLM + diffusion, Fourier GR-1 deployment). Paradigm arc: VLM-to-action proof → open-scale reference → flow-matching at 50 Hz → dual-system 2B-class humanoid VLA. Illustration by author (Gemini-assisted reconstruction).


10.3 Four canonical open VLAs — comparative analysis

| System | Params | Action head | Frequency | Training data | Cross-embodiment scope |
|---|---|---|---|---|---|
| RT-2 [6] | ~55B (PaLI-X variant) | tokenized | ~1–3 Hz | Proprietary web + robotic mix | Single robot (Google's mobile manipulator) |
| OpenVLA [18] | 7B | tokenized | ~10 Hz (100 ms/action) | Open X-Embodiment (970k episodes, 22 embodiments) | Cross-embodiment by training |
| π0 [19] | 3B | flow matching | 50 Hz | 7 platforms, 68 tasks | 7 platforms |
| GR00T N1 [20] | 2.2B (1.34B VLM + 860M diffusion) | diffusion transformer | ~120 Hz head / ~10 Hz VLM (on an NVIDIA L40 GPU) | Sim + human video + teleop mix | Real-world: Fourier GR-1 humanoid; sim-only: Franka Panda arm variants |

Four observations fall out of the table.

Parameter-count has compressed over time. RT-2 used an extremely large multimodal backbone; subsequent VLAs are substantially smaller, with GR00T N1 under 10% of RT-2's scale. The compression reflects the field's growing confidence that VLM pretraining quality matters more than VLM parameter count for action tasks. Smaller backbones that are well-pretrained on images, text, and code can match RT-2's generalization at roughly one-twentieth the inference cost.
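The ratios quoted above can be checked directly from the table. The sketch below is plain arithmetic on the parameter counts listed there; treating parameter count as a proxy for inference cost is a simplifying assumption, since real cost also depends on the action head, hardware, and batch size.

```python
# Parameter counts in billions, copied from the comparison table in this section.
PARAMS_B = {"RT-2": 55.0, "OpenVLA": 7.0, "pi0": 3.0, "GR00T N1": 2.2}


def fraction_of_rt2(name: str) -> float:
    """Return a model's parameter count as a fraction of RT-2's."""
    return PARAMS_B[name] / PARAMS_B["RT-2"]


for name in PARAMS_B:
    print(f"{name:9s} {fraction_of_rt2(name):6.1%} of RT-2's parameter count")
```

On these figures GR00T N1 sits at about 4% of RT-2's scale and π0 at about 5%, which is where the "under 10%" and "roughly one-twentieth" phrasing comes from.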

Action-head design has diverged. Tokenized actions (RT-2, OpenVLA) are simple and reuse the LM decoder; the trade-off is action resolution and multi-modality. Flow matching (π0) and diffusion (GR00T, Helix) handle multi-modal action distributions naturally at the cost of additional inference steps per action. The FAST action-tokenization work [24] partially closes this gap by demonstrating that carefully-designed action tokens can be efficient enough to match flow-matching throughput while retaining autoregressive simplicity.

Frequency has increased with each generation. RT-2's roughly 1–3 Hz operation was acceptable for the mobile-manipulation domain it was evaluated on; humanoid whole-body control requires frequencies at least 10× higher. π0 at 50 Hz and GR00T at humanoid-class rates are the 2024+ answer; the rate is still slower than Chapter 9's System 1 target of 100–200 Hz, which is why production humanoid VLAs typically also have a faster System 1 / System 0 pair beneath them.

Cross-embodiment training is now the default. OpenVLA's 22 embodiments were an early-adopter bet; by 2025–2026, every serious VLA trains on multi-embodiment data. The unresolved question is whether training-set embodiment coverage translates to deployment-time generalization on unseen embodiments — the subject of §10.6.

10.4 Figure Helix and AgiBot GO-1/GO-2 — the production VLAs

Two closed-source production VLAs dominate 2025–2026 humanoid deployment.

Figure Helix [33] is the public reference for the "System 1 + System 2" VLA naming. Helix S2 is an onboard internet-pretrained VLM at 7–9 Hz; Helix S1 is a fast reactive visuomotor policy at 200 Hz mapping latent semantics from S2 plus visuals plus proprioception to continuous joint actions across the entire upper body. Helix was the first VLA reported to run fully onboard a low-power embedded GPU. Helix 02 [34] retains the two-layer naming and adds System 0 beneath (Chapter 9 §9.2): a 10-million-parameter whole-body controller at 1 kHz. The complete Helix 02 stack — 7B VLM + visuomotor policy + 10M System 0 — is documented as demonstrating 4 minutes of continuous dishwasher loading and 61 loco-manipulation primitives with no resets, with approximately four orders of magnitude dynamic range from millimeter fingertip motion to room-scale locomotion [34].
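The three rates quoted for the Helix 02 stack imply a nested schedule: many System 0 ticks per System 1 step, and many System 1 steps per System 2 update. The following is a minimal sketch of that timing relationship only, not of Figure's implementation. It assumes a shared 1 kHz base clock and takes 8 Hz as a hypothetical midpoint of the reported 7–9 Hz range; the layer names and the `run` helper are illustrative.

```python
BASE_HZ = 1000  # System 0 rate, used here as the base clock
RATES_HZ = {"S2": 8, "S1": 200, "S0": 1000}  # S2 = assumed midpoint of 7-9 Hz


def run(duration_s: float = 1.0) -> dict:
    """Count how often each layer fires and how stale S2's latent gets."""
    periods = {name: BASE_HZ // hz for name, hz in RATES_HZ.items()}
    fires = {name: 0 for name in RATES_HZ}
    latent_age_ticks = 0   # base-clock ticks since S2 last refreshed its latent
    worst_age_ticks = 0    # largest latent age seen by any S1 step
    for tick in range(int(duration_s * BASE_HZ)):
        if tick % periods["S2"] == 0:
            fires["S2"] += 1
            latent_age_ticks = 0
        if tick % periods["S1"] == 0:
            fires["S1"] += 1
            worst_age_ticks = max(worst_age_ticks, latent_age_ticks)
        fires["S0"] += 1
        latent_age_ticks += 1
    fires["worst_latent_age_ms"] = worst_age_ticks * 1000 / BASE_HZ
    return fires


print(run(1.0))
```

Under these assumptions each System 2 latent is reused for 25 consecutive System 1 steps, so the fast policy must tolerate semantic context that is up to roughly 120 ms old.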

AgiBot GO-1 [31] is built around a Vision-Language-Latent-Action (ViLLA) architecture: a latent planner (temporal reasoning) plus an action expert (robot control). GO-1 was introduced alongside the AgiBot World dataset [30], collected from 100 dual-arm humanoids across 5 deployment scenarios, covering 217 tasks and over 1 million trajectories. This is the largest public humanoid manipulation corpus. GO-1 reports +32% success over RDT baselines and +30% over Open X-Embodiment-trained policies on its evaluation suite [31]. AgiBot GO-2 [32] builds on GO-1 with an asynchronous dual-system architecture (Chapter 9 §9.1): low-frequency semantic planning at System 2 plus high-frequency action following at System 1, running on Genie Sim 3.0's decoupled physics-rendering pipeline. GO-2 has been accepted at ACL 2026.

The Helix and GO-2 architectures differ on three axes. First, Figure operates on a single-company embodiment chain (Figure 02 → Figure 03), whereas AgiBot deliberately operates on a scaled humanoid fleet (100 humanoids for data collection). Second, Figure emphasizes onboard inference at all layers; AgiBot's asynchronous framing is more tolerant of communication with a larger compute plane. Third, Figure's data is teleoperation-heavy (reportedly under 500 hours in the 2025 Helix paper); AgiBot's data is collection-fleet-heavy (1 million trajectories via 100 humanoids). Each architecture's bets on compute-locality and data-gathering inform Chapter 12 and Chapter 13's detailed analyses.

10.5 Loco-manipulation integration

The VLA literature was split for years between a manipulation lineage descending from RT-2 and a locomotion lineage descending from Radosavovic et al. The integration of the two, in the form of whole-body policies that treat locomotion and manipulation as a single problem, is the 2024–2025 frontier.

Several systems anchor the integration.

Expressive Whole-Body Control (ExBody) [7] decouples upper-body tracking (mocap-derived) from lower-body locomotion (RL on rough terrain) on Unitree H1. The decoupling was a design choice for modularity; subsequent systems aim for tighter integration.

HumanPlus [8] performs RGB-to-humanoid imitation in four stages: a single camera observes a human, pose estimation produces SMPL-X poses, retargeting maps those poses to the H1, and a shadow-policy reward trains the humanoid in Isaac Gym. HumanPlus demonstrates whole-body tasks (boxing, typing, cloth folding, ball tossing) at 60–85% success after a 40-demonstration fine-tune.

H2O [9] and OmniH2O [10] extend to real-time teleoperation. H2O runs at approximately 50 ms latency with single-RGB teleop. OmniH2O adds 5-finger Inspire hands and universal retargeting; autonomous policies trained on 6 hours of teleop data reach 60–90% success.

TWIST [28] combines VR-style teleop with a causal-Transformer policy distilled from mocap and teleop; deployed on Unitree G1, it produces 25+ whole-body skills from a single policy and 120 N lateral push recovery.

HOVER [11] is the 2024 unified whole-body controller with 15+ distinct control modes at 200 Hz Jetson-class inference. Humanoid-VLA [26] pushes the VLA explicitly toward dynamic whole-body control rather than manipulation-only.

FALCON [29] adds force-adaptive whole-body loco-manipulation — a whole-body policy coupled with force-sensing arms for contact-rich tasks. FALCON is the closest public precedent to the contact-rich manipulation that Chapter 7 identified as the open sim-to-real frontier.

OmniRetarget [27] is the data-scale enabler: 8+ hours of feasible retargeted trajectories through interaction-mesh retargeting, supporting 30-second humanoid parkour with zero foot-skating (vs ~18% in baselines). OmniRetarget's contribution is upstream of the VLA itself — it produces the training data that whole-body VLAs benefit from.

Perceptive Pedipulation [12] illustrates a different integration direction: the foot used as a manipulator (pedipulation) with local obstacle avoidance. The concept generalizes beyond pedipulation to the broader point that limbs traditionally assigned to locomotion (legs, feet) and manipulation (arms, hands) are dynamically interchangeable in a whole-body VLA framework.

Visual Whole-Body Control [15] and Mobile ALOHA [16] are the bimanual-manipulation-plus-locomotion precedents; Mobile ALOHA's low-cost whole-body teleoperation at 2024 scale preceded most humanoid whole-body teleoperation.

The integration pattern emerging across these systems: locomotion is treated as a solved primitive (Part II verdict), manipulation rides on top via upper-body VLA, and the interface between the two is negotiated per-system. Figure Helix uses a single unified visuomotor policy; AgiBot uses a dual-system asynchronous split; HumanPlus/TWIST/HOVER use distillation from mocap or teleop; ExBody uses explicit decoupling. No one pattern has won yet.

10.6 Cross-embodiment generalization

The economic premise of VLAs at commercial scale is that a single policy can be amortized across many robot SKUs. If cross-embodiment transfer degrades success by >20% when deploying on non-training embodiments, the VLA story collapses into per-embodiment fine-tuning, which is a substantially weaker value proposition.
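The 20% threshold can be made concrete as a simple check. The text does not say whether the drop is relative or absolute; the sketch below assumes a relative drop in success rate, and the function names and example numbers are hypothetical.

```python
def relative_drop(train_success: float, deploy_success: float) -> float:
    """Relative loss in success rate when moving to an unseen embodiment."""
    if not 0.0 < train_success <= 1.0 or not 0.0 <= deploy_success <= 1.0:
        raise ValueError("success rates must be fractions, with train_success > 0")
    return (train_success - deploy_success) / train_success


def needs_per_embodiment_finetune(train_success: float,
                                  deploy_success: float,
                                  threshold: float = 0.20) -> bool:
    """True when the drop exceeds the threshold assumed in the text (20%)."""
    return relative_drop(train_success, deploy_success) > threshold


# Hypothetical numbers: 80% success on training embodiments, 60% on a new one.
print(relative_drop(0.80, 0.60))                  # about 0.25
print(needs_per_embodiment_finetune(0.80, 0.60))  # True
```

Under an absolute-drop reading the same example would sit exactly at 20 percentage points, so a benchmark would need to state which definition it uses.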

The public evidence on cross-embodiment generalization is mixed. OpenVLA trains on 22 OXE embodiments and shows non-trivial generalization to fresh manipulation tasks [18]. GR00T N1 deploys in the real world on a single humanoid embodiment (Fourier GR-1), with simulation-only cross-embodiment evaluations extending to Franka Panda arm variants; this is narrower cross-humanoid evidence than early coverage suggested [20]. π0 trains on 7 platforms including wheeled mobile manipulators and stationary arms; humanoid-specific cross-embodiment is not the primary evaluation [19]. Helix is demonstrated on Figure 02 and Figure 03, which come from the same company and have near-identical kinematics [33, 34]. OmniH2O explicitly flags its single-embodiment scope, with cross-embodiment not covered, as a limitation [10]. HOVER operates on a single embodiment with 15+ control modes [11].

This is the gap in which cross-embodiment transfer is asserted but not systematically benchmarked. The engineering remedies, namely latent-action representations (GO-1's ViLLA, PHC/PULSE motion latents) and visual proprioception models (Figure's 6D end-effector self-calibration), are partial solutions. The first rigorous public benchmark (N≥5 distinct humanoid embodiments with a controlled task suite) is probably 18–30 months out.

The strategic consequence for Part V: Korea's four differentiation axes (Chapter 15) explicitly include cross-embodiment transfer as axis 4. If the axis remains open into the late 2020s, Korean manufacturers can enter with domain-specific cross-embodiment work tuned to manufacturing SKU variety (automotive assembly-line robots + semiconductor fab robots + shipbuilding robots under one policy) and establish defensible positions.

10.7 Action decoders — diffusion, flow, tokens, FAST

The action decoder is the part of the VLA that converts multi-modal observation-language context into actual joint commands. Four approaches dominate.

Tokenized actions (RT-2, OpenVLA) discretize continuous action space into tokens that an autoregressive decoder predicts one at a time. The advantage is architectural simplicity — reuse the language-model decoder — and the disadvantage is that action resolution is bounded by token granularity and that sequential token generation is slow.
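The resolution limit described above follows directly from uniform binning. The sketch below is a minimal illustration, assuming 256 bins per action dimension and a normalized action range of [-1, 1]; both values and the function names are assumptions for illustration, not a description of any particular model's tokenizer.

```python
import numpy as np

N_BINS = 256  # assumed number of bins (tokens) per action dimension


def tokenize(action, low, high, n_bins=N_BINS):
    """Map each continuous action dimension to an integer bin index."""
    action = np.clip(action, low, high)
    scaled = (action - low) / (high - low)  # now in [0, 1]
    return np.minimum((scaled * n_bins).astype(int), n_bins - 1)


def detokenize(tokens, low, high, n_bins=N_BINS):
    """Map bin indices back to the centre of each bin."""
    return low + (tokens + 0.5) * (high - low) / n_bins


action = np.array([-1.0, 0.0, 0.5, 1.0])  # hypothetical normalized action
tokens = tokenize(action, -1.0, 1.0)
recovered = detokenize(tokens, -1.0, 1.0)
print(tokens)
print(np.abs(recovered - action).max())  # at most half a bin width
```

The round-trip error is bounded by half a bin width, here 1/256 of the normalized range. Finer resolution requires more bins, and therefore a larger action vocabulary for the decoder.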

Diffusion action policies (Diffusion Policy [5], GR00T N1's diffusion transformer, Helix) generate action sequences by reversing a noising process. Multi-modal action distributions emerge naturally; inference costs O(denoising-steps) per action, typically 10–50 steps.
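The per-step cost is easiest to see in the sampling loop itself. The sketch below uses a deterministic DDIM-style update, which is one common sampler and not necessarily the one used by any system named above. The trained denoiser is replaced by a hypothetical oracle, `oracle_eps`, that is exact only when every training action equals `TARGET`; the noise schedule and the 20-step count are likewise assumptions.

```python
import numpy as np


def ddim_sample(eps_model, alpha_bars, shape, rng):
    """Deterministic DDIM-style reverse pass; one eps_model call per step."""
    x = rng.standard_normal(shape)  # start from pure noise
    calls = 0
    for i in range(len(alpha_bars) - 1, -1, -1):
        ab_t = alpha_bars[i]
        ab_prev = alpha_bars[i - 1] if i > 0 else 1.0  # 1.0 means no noise left
        eps = eps_model(x, ab_t)
        calls += 1
        # Estimate the clean action, then re-noise it to the previous level.
        x0_hat = (x - np.sqrt(1.0 - ab_t) * eps) / np.sqrt(ab_t)
        x = np.sqrt(ab_prev) * x0_hat + np.sqrt(1.0 - ab_prev) * eps
    return x, calls


TARGET = np.array([0.3, -0.1, 0.7])  # hypothetical 3-DoF action


def oracle_eps(x, ab_t):
    """Stand-in for a trained denoiser: exact noise if all data equals TARGET."""
    return (x - np.sqrt(ab_t) * TARGET) / np.sqrt(1.0 - ab_t)


alpha_bars = np.linspace(0.99, 0.01, 20)  # index 0 is the least noisy level
action, n_calls = ddim_sample(oracle_eps, alpha_bars, TARGET.shape,
                              np.random.default_rng(0))
print(action, n_calls)
```

Each loop iteration is one forward pass of the action head, so a 20-step sampler costs 20 network calls per generated action or action chunk. This is the overhead that flow matching and FAST aim to reduce.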

Flow matching (π0, π0.5) generates actions by integrating a learned velocity field from a base distribution to the action distribution. Training is often simpler than diffusion; inference can be faster (fewer integration steps needed for comparable quality).
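Integrating a velocity field amounts to a short ODE solve. The sketch below uses fixed-step Euler integration from t=0 to t=1. The learned field is replaced by a hypothetical `toy_velocity` that always points straight at a fixed `TARGET`, which is an assumption made so the example can be checked by hand.

```python
import numpy as np


def integrate_flow(velocity, x0, n_steps):
    """Euler-integrate dx/dt = velocity(x, t) from t=0 to t=1."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt
        x = x + dt * velocity(x, t)  # one network call per step in a real model
    return x


TARGET = np.array([0.3, -0.1, 0.7])  # hypothetical 3-DoF action


def toy_velocity(x, t):
    """Stand-in for a learned field: heads straight for TARGET (valid for t < 1)."""
    return (TARGET - x) / (1.0 - t)


noise = np.random.default_rng(0).standard_normal(3)  # sample from the base distribution
action = integrate_flow(toy_velocity, noise, n_steps=10)
print(action)
```

With this straight-line toy field any step count lands on the target. A learned field is generally curved, so the number of Euler steps trades accuracy against latency, and straighter learned paths are what allow fewer steps at comparable quality.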

FAST tokenization [24] is the 2025 bridge between autoregressive and continuous approaches. FAST compresses action sequences into a compact token vocabulary that preserves the structure diffusion would capture but retains autoregressive efficiency. π0-FAST [23] combines π0 with FAST to recover some of the efficiency advantages. SmolVLA [25] is the affordability-oriented variant — a small-model VLA optimized for edge deployment.
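This chapter does not spell out FAST's internals, so the following sketch only illustrates the general idea of compressing a smooth action chunk before tokenizing it. It assumes a frequency-domain scheme, a discrete cosine transform followed by coefficient quantization; the quantization step size and the sine-wave trajectory are hypothetical choices.

```python
import numpy as np


def dct_matrix(n):
    """Orthonormal DCT-II basis; rows are frequencies, the inverse is the transpose."""
    k = np.arange(n)[:, None]
    t = np.arange(n)[None, :]
    basis = np.cos(np.pi * (t + 0.5) * k / n) * np.sqrt(2.0 / n)
    basis[0] /= np.sqrt(2.0)
    return basis


def compress(chunk, step=0.05):
    """Quantize DCT coefficients; for smooth motion most of them become zero."""
    coeffs = dct_matrix(len(chunk)) @ chunk
    return np.round(coeffs / step).astype(int)


def decompress(quantized, step=0.05):
    """Invert the quantization and the transform."""
    return dct_matrix(len(quantized)).T @ (quantized * step)


# Hypothetical one-second chunk of a single joint at 50 Hz.
t = np.linspace(0.0, 1.0, 50)
chunk = 0.5 * np.sin(2.0 * np.pi * t)
quantized = compress(chunk)
restored = decompress(quantized)
print(np.count_nonzero(quantized), "non-zero coefficients out of", len(chunk))
print("max reconstruction error:", np.abs(restored - chunk).max())
```

Only the non-zero coefficients need to be emitted as tokens, so a smooth 50-step chunk is represented by far fewer symbols than one token per time step. A further lossless compression stage over the integer coefficients could shorten the sequence again.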

The design-space selection depends on what the VLA is for. For high-frequency whole-body control, fewer inference steps per action matters: flow matching and FAST are the 2025+ defaults. For open-world manipulation where multi-modal action distributions are essential (multiple valid grasps, multiple object-approach trajectories), diffusion remains strong. For cross-embodiment transfer where the action space is a moving target, tokenization provides a uniform abstraction that retraining partially absorbs.

ACT (Action Chunking with Transformers) [4] is the 2023 precursor that established action chunking — predicting multiple timesteps of action at once — as a standard VLA technique. ACT influenced both the diffusion-policy and FAST-tokenization lines.
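Action chunking changes how often the policy is queried, not how often the robot is commanded. The sketch below shows the open-loop variant, in which each chunk is executed to completion before the next query. The `dummy_policy` and the chunk length are hypothetical, and overlapping-chunk ensembling is deliberately left out.

```python
def run_chunked(policy, n_steps, chunk_len):
    """Execute n_steps control ticks, querying the policy once per chunk."""
    executed, queries = [], 0
    buffer = []
    for step in range(n_steps):
        if not buffer:  # chunk exhausted: ask the policy for the next one
            buffer = list(policy(step, chunk_len))
            queries += 1
        executed.append(buffer.pop(0))
    return executed, queries


def dummy_policy(start_step, chunk_len):
    """Hypothetical policy: returns placeholder actions labelled by time step."""
    return [f"a{start_step + i}" for i in range(chunk_len)]


actions, n_queries = run_chunked(dummy_policy, n_steps=100, chunk_len=10)
print(len(actions), "actions from", n_queries, "policy queries")
```

With a chunk length of 10, one hundred control ticks need only ten forward passes. That is how a slow backbone can still drive a fast control loop, at the cost of reacting to new observations only at chunk boundaries.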

Universal Manipulation Interface (UMI) [17] is a different axis of innovation: a hardware-agnostic data-collection tool (handheld gripper with camera) that captures in-the-wild human manipulation demonstrations, which then train robotic policies. UMI addresses the data-scarcity problem (§10.8) rather than the decoder-architecture problem.

10.8 Data scale for VLAs

VLAs inherit the scaling intuitions of language models: more data, better policy. The data-scale question for VLAs has three sub-questions.

Scale of real-robot data. The largest public humanoid-specific dataset is AgiBot World at approximately 1 million trajectories across 100 humanoids and 217 tasks [30]. Across all robotic platforms (OXE-scale), approximately 970,000 episodes are public [3]. Helix's reported teleop corpus of roughly 500 hours is substantially smaller but more consistent (same hardware, same tasks).

Scale of simulation data. GR00T N1 uses "neural-network-generated trajectories" in its training mix [20], and the AgiBot World paper notes simulation-generated trajectory augmentation. The gap between 1 M trajectories (real) and 100 M+ trajectories (simulated, achievable with Isaac Lab at scale) is substantial but simulated manipulation transfer remains limited by contact fidelity (Chapter 5 §5.5).

Scale of human-video data. Radosavovic et al. [37] report that adding human-video tokens improves push recovery by ~25% over RL-only baselines on humanoid locomotion. UMI [17] and prior Ego4D-style datasets are the scaling corpus. The question is whether human-video pretraining transfers to humanoid manipulation as effectively as it transfers to locomotion; current evidence is ambivalent.

The aggregate data question — what mix of real-robot, simulated, and human-video data produces the best VLA — is not yet settled. Current production stacks mix all three in proportions driven by engineering constraints (Figure's small-but-consistent teleop bet vs AgiBot's large-but-variable fleet-collection bet) rather than a principled scaling law. The first rigorous humanoid-VLA scaling-law paper is a near-term research opportunity (Chapter 8 §8.9).
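In practice a data mix is a set of sampling weights over sources. The sketch below shows one way such a mixture could be drawn when assembling training batches. The three weights are purely hypothetical placeholders and do not reflect any company's reported proportions.

```python
import random
from collections import Counter

# Hypothetical mixture weights; none of these proportions are reported figures.
MIX = {"real_robot": 0.5, "simulation": 0.3, "human_video": 0.2}


def sample_sources(weights, n, seed=0):
    """Draw n source labels according to the mixture weights."""
    rng = random.Random(seed)
    names = list(weights)
    probs = [weights[name] for name in names]
    return Counter(rng.choices(names, weights=probs, k=n))


counts = sample_sources(MIX, n=10_000)
for name, count in counts.items():
    print(f"{name:12s} {count / 10_000:.1%}")
```

A scaling-law study of the kind described above would sweep these weights, together with total dataset size, and measure downstream task success instead of fixing them by engineering convenience.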

10.9 Toyota Research Institute and Large Behavior Models

Toyota Research Institute's Large Behavior Model (LBM) [14] is the research-lab-scale program that occupies the S2 layer for Boston Dynamics's Electric Atlas (Chapter 11). LBM is built on Diffusion Policy [5] foundations and emphasizes multi-task learning, data-scaling experiments, and deployment on real hardware via TRI's research fleet. LBM's explicit framing is that "a single policy, pre-trained on diverse manipulation data, then lightly fine-tuned per task" is the deployable VLA recipe — a position close to OpenVLA and π0 and different from Figure's single-weight-set approach.

LBM is a case where the VLA research has not yet released a definitive public paper with all the training details; public disclosures are tech-talks and partial releases. LBM's significance is more institutional than architectural: it is TRI's Atlas collaboration that tests whether learned-VLA approaches scale to Boston Dynamics's hardware, and it is the S2 candidate for BD's hybrid-MPC+RL-plus-VLA pipeline.

10.10 Companion surveys

Four surveys, published between 2019 and 2025, complement this chapter and are worth naming for readers who want technique-catalog depth beyond the narrative this chapter provides.

Kroemer, Niekum, and Konidaris (2021) [1] is the canonical pre-VLA review of robot learning for manipulation; a good prerequisite if the reader is new to manipulation learning as a subfield.

Wolf et al. (2025) [35] surveys diffusion models for robotic manipulation at technique-catalog depth, covering the diffusion-policy lineage, flow matching, tokenization variants, and the 2024–2025 extensions.

Weinberg et al. (2024) [13] surveys learning-based approaches for robotic in-hand manipulation — the dexterous-manipulation subfield that is substantially harder than the whole-body manipulation most humanoid VLAs currently target.

Billard and Kragic (2019) [2] is the pre-deep-learning manipulation review — useful for the classical-to-modern bridge similar to how Kober 2013 bridges RL's pre-deep era to the modern canon.

10.11 Verdict and open questions

Part III verdict (the integrated Ch09 + Ch10 summary): solved architecture, partially-solved loco-manipulation. The System 0/1/2 architecture (Chapter 9) is the cross-company lingua franca for 2026 deployment. VLA models now exist at production quality (Figure Helix 02, AgiBot GO-2, NVIDIA GR00T N1.5) with documented whole-body loco-manipulation capability. The architecture is solved; the frontier is in the residual integration quality.

Three open questions close the chapter.

First, what does a rigorous cross-embodiment benchmark look like, and who will publish it? The benchmark is the single most important thing missing from the VLA literature. It will require coordinated access to at least 5 distinct humanoid embodiments (Unitree G1, Figure 03, Digit, GR-1, H1) with a controlled task suite. The benchmark is likely to come from a research consortium rather than any single company; academic-industry consortia (CoRL workshops, NeurIPS datasets track) are plausible vehicles.

Second, can contact-rich manipulation VLAs match manipulation-only VLAs in success rate? Whole-body policies that integrate locomotion and manipulation (FALCON, HOVER, Humanoid-VLA) are demonstrably capable but their manipulation success rates on contact-rich tasks lag purpose-built manipulation VLAs. The gap is the reason Chapter 15's differentiation-axis argument is load-bearing: contact-rich manufacturing tasks are exactly the regime where whole-body + force-sensing integration has not yet converged.

Third, is the BFM (Behavior Foundation Model) framing the right organizing principle for the next 2–3 years of VLA research? The BFM survey [36] argues yes. This chapter's framing — System 0/1/2 architecture with VLA-as-S1/S2-realization — is compatible with BFM but focuses on the deployment-architecture aspect rather than the pre-training-framework aspect. Both perspectives will continue to develop; the question is whether they merge into a unified "pretrained-BFM-in-System-0/1/2-architecture" framing or remain complementary.

10.12 Bridge to Part IV

Chapters 4–10 have audited the technological and architectural substrate. Part IV (Chapters 11–13) examines the companies that instantiate the substrate: Boston Dynamics's hybrid MPC+RL+LBM architecture (Ch11), the US challengers Figure and Agility with Tesla Optimus outlook (Ch12), and China's Unitree and AgiBot leaders (Ch13). Each chapter is a technical-strategic analysis — not a procurement guide or a financial model — that reads the company's public architecture through the lens Parts II and III established.

References

- [1] Kroemer, O., Niekum, S., & Konidaris, G. (2021). A review of robot learning for manipulation: Challenges, representations, and algorithms. JMLR.

- [2] Billard, A., & Kragic, D. (2019). Trends and challenges in robot manipulation. Science.

- [3] Padalkar, A., et al. (2023). Open X-Embodiment: Robotic learning datasets and RT-X models. arXiv preprint.

- [4] Zhao, T. Z., Kumar, V., Levine, S., & Finn, C. (2023). Learning fine-grained bimanual manipulation with low-cost hardware (ACT). Proc. RSS.

- [5] Chi, C., et al. (2023). Diffusion policy: Visuomotor policy learning via action diffusion. Proc. RSS.

- [6] Brohan, A., et al. (2023). RT-2: Vision-language-action models transfer web knowledge to robotic control. arXiv preprint.

- [7] Cheng, X., et al. (2024). Expressive whole-body control for humanoid robots. Proc. RSS. arXiv:2402.16796.

- [8] Fu, Z., et al. (2024). HumanPlus: Humanoid shadowing and imitation from humans. Proc. CoRL.

- [9] He, T., et al. (2024). Learning human-to-humanoid real-time whole-body teleoperation (H2O). Proc. IEEE/RSJ IROS.

- [10] He, T., et al. (2024). OmniH2O: Universal and dexterous human-to-humanoid whole-body teleoperation and learning. Proc. CoRL.

- [11] He, T., et al. (2024). HOVER: Versatile neural whole-body controller for humanoid robots. Proc. IEEE ICRA 2025.

- [12] Grandia, R., et al. (2024). Perceptive pedipulation with local obstacle avoidance.

- [13] Weinberg, A., et al. (2024). Survey of learning-based approaches for robotic in-hand manipulation.

- [14] Tedrake, R. (2024). Toyota Research Institute Large Behavior Models for robot manipulation. TRI presentations and partial releases.

- [15] Liu, S., et al. (2024). Visual whole-body control for legged loco-manipulation.

- [16] Fu, Z., Zhao, T. Z., & Finn, C. (2024). Mobile ALOHA: Learning bimanual mobile manipulation with low-cost whole-body teleoperation.

- [17] Chi, C., et al. (2024). Universal manipulation interface: In-the-wild robot teaching without in-the-wild robots (UMI).

- [18] Kim, M. J., et al. (2024). OpenVLA: An open-source vision-language-action model.

- [19] Black, K., et al. (2024). π0: A vision-language-action flow model for general robot control.

- [20] Bjorck, J., et al. (2025). GR00T N1: An open foundation model for generalist humanoid robots.

- [21] NVIDIA. (2025). GR00T N1.5: Improved foundation model for generalist humanoid robots.

- [22] Physical Intelligence. (2025). π0.5: A vision-language-action model with open-world generalization.

- [23] Black, K., et al. (2025). π0-FAST: Autoregressive π0 with FAST tokenizer.

- [24] Pertsch, K., et al. (2025). FAST: Efficient action tokenization for vision-language-action models.

- [25] Shukor, M., et al. (2025). SmolVLA: A vision-language-action model for affordable and efficient robotics.

- [26] Yang, H., et al. (2025). Humanoid-VLA: Generalist VLA with dynamic whole-body control.

- [27] Yang, H., et al. (2025). OmniRetarget: Interaction-preserving data generation for humanoid whole-body loco-manipulation and scene interaction.

- [28] Ze, Y., et al. (2025). TWIST: Teleoperated whole-body imitation system.

- [29] Zhang, J., et al. (2025). FALCON: Learning force-adaptive humanoid loco-manipulation.

- [30] AgiBot. (2025). AgiBot World Colosseo: A large-scale manipulation platform.

- [31] AgiBot. (2025). AgiBot GO-1: ViLLA generalist embodied foundation model.

- [32] AgiBot. (2026). AgiBot GO-2: Asynchronous dual-system architecture.

- [33] Figure AI. (2025). Helix: A vision-language-action model for generalist humanoid control. Figure AI tech blog, February 2025.

- [34] Figure AI. (2026). Figure 03 + Helix 02: General-purpose humanoid system.

- [35] Wolf, R., Shi, Y., Liu, S., & Rayyes, R. (2025). Diffusion models for robotic manipulation: A survey.

- [36] Yuan, M., et al. (2025). A survey of behavior foundation model: Next-generation whole-body control system of humanoid robots. arXiv preprint 2506.20487.

- [37] Radosavovic, I., et al. (2024). Humanoid locomotion as next token prediction. NeurIPS. arXiv:2402.19469.